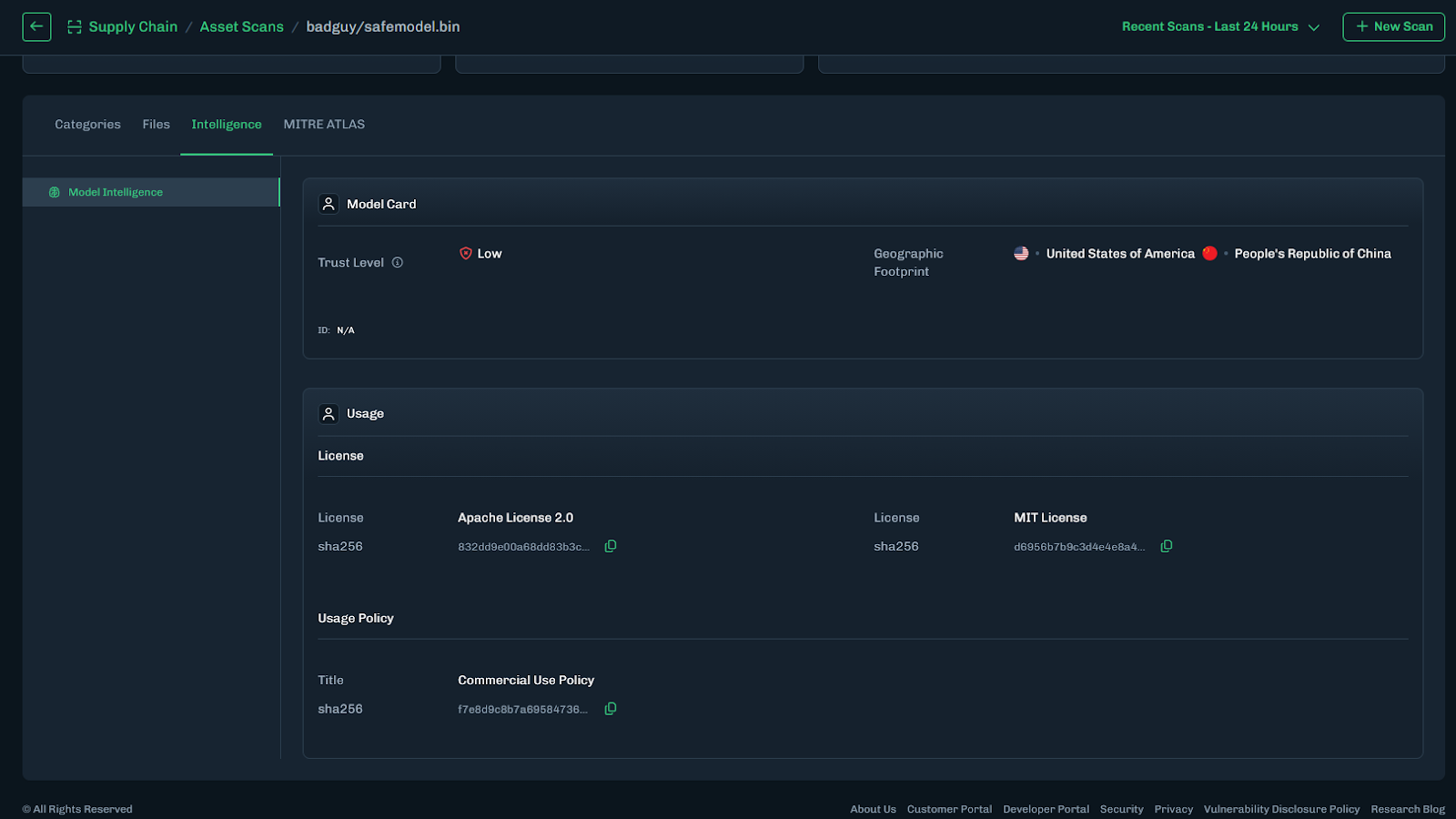

Model Intelligence

February 24, 2026

From Blind Model Adoption to Informed AI Deployment

As organizations accelerate AI adoption, they increasingly rely on third-party and open-source models to drive new capabilities across their business. Frequently, these models arrive with limited or nonexistent metadata around licensing, geographic exposure, and risk posture. The result is blind deployment decisions that introduce legal, financial, and reputational risk. HiddenLayer’s Model Intelligence eliminates that uncertainty by delivering structured insight and risk transparency into the models your organization depends on.

Three Core Attributes of Model Intelligence

HiddenLayer’s Model Intelligence focuses on three core attributes that enable risk-aware deployment decisions:

License

Licenses define how a model can be used, modified, and shared. Some, such as the MIT License or Apache 2.0, are permissive. Others impose commercial, attribution, or use-case restrictions.

Identifying license terms early ensures models are used within approved boundaries and aligned with internal governance policies and regulatory requirements.

For example, a development team integrates a high-performing open-source model into a revenue-generating product, only to later discover the license restricts commercial use or imposes field-of-use limitations. What initially accelerated development quickly turns into a legal review, customer disruption, and a costly product delay.
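A check like this can be automated as an early gate in the intake process. The sketch below is illustrative only: the policy tables, the use of SPDX license identifiers, and the names `check_license` and `LicenseDecision` are assumptions made for this example, not part of HiddenLayer's product. In practice an organization's legal team would define which licenses fall into which category.

```python
from enum import Enum
from typing import Optional


class LicenseDecision(Enum):
    APPROVED = "approved"
    NEEDS_LEGAL_REVIEW = "needs_legal_review"
    BLOCKED = "blocked"


# Hypothetical policy tables keyed by SPDX license identifier.
PERMISSIVE = {"MIT", "Apache-2.0", "BSD-3-Clause"}
NON_COMMERCIAL = {"CC-BY-NC-4.0", "CC-BY-NC-SA-4.0"}


def check_license(spdx_id: Optional[str], commercial_use: bool) -> LicenseDecision:
    """Map a detected license to an intake decision under the tables above."""
    if spdx_id is None:
        # Missing license metadata is itself a reason to stop and ask.
        return LicenseDecision.NEEDS_LEGAL_REVIEW
    if spdx_id in PERMISSIVE:
        return LicenseDecision.APPROVED
    if spdx_id in NON_COMMERCIAL:
        # Non-commercial terms only block revenue-generating use.
        return LicenseDecision.BLOCKED if commercial_use else LicenseDecision.APPROVED
    # Anything unrecognized goes to a human rather than being guessed at.
    return LicenseDecision.NEEDS_LEGAL_REVIEW


print(check_license("CC-BY-NC-4.0", commercial_use=True))
```

Routing unknown or missing licenses to review, rather than approving or blocking them by default, keeps the gate conservative without stalling teams on licenses the policy already covers.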

Geographic Footprint

A model’s geographic footprint reflects the countries where it has been discovered across global repositories. This provides visibility into where the model is circulating, hosted, or redistributed.

Understanding this footprint helps organizations assess geopolitical, intellectual property, and security risks tied to jurisdiction and potential exposure before deployment.

For example, a model widely mirrored across repositories in sanctioned or high-risk jurisdictions may introduce export control considerations, sanctions exposure, or heightened compliance scrutiny, particularly for organizations operating in regulated industries such as financial services or defense.
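One simple way to act on a footprint is to compare it against an organization-defined watchlist. The sketch below assumes the footprint is available as a list of ISO 3166-1 alpha-2 country codes. The function name `flagged_jurisdictions` and the watchlist itself are hypothetical, and the codes `XA` and `XB` are user-assigned placeholders rather than real countries.

```python
from typing import Iterable, List


def flagged_jurisdictions(footprint: Iterable[str], watchlist: Iterable[str]) -> List[str]:
    """Return the footprint countries that also appear on the watchlist.

    Codes are compared case-insensitively and returned sorted, so the
    result is stable across runs.
    """
    seen = {code.upper() for code in footprint}
    watched = {code.upper() for code in watchlist}
    return sorted(seen & watched)


# Placeholder watchlist; a compliance team would supply the real one.
WATCHLIST = {"XA", "XB"}

hits = flagged_jurisdictions(["us", "de", "xa"], WATCHLIST)
print(hits)
```

A non-empty result does not by itself mean a model is unusable. It marks the model for the kind of export control or sanctions review described above.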

Trust Level

Trust Level provides a measurable indicator of how established and credible a model’s publisher is on the hosting platform.

For example, two models may offer comparable performance. One is published by an established organization with a history of maintained releases, version control, and transparent documentation. The other is released by a little-known publisher with limited history or observable track record. Without visibility into publisher credibility, teams may unknowingly introduce unnecessary supply chain risk.

Trust Level enables teams to prioritize review efforts, applying deeper scrutiny to lower-trust sources while reducing friction for higher-trust ones. When combined with license and geographic context, trust becomes a powerful input for supply chain governance and compliance decisions.
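To make the prioritization concrete, the three signals can be folded into a single review tier. This is a minimal sketch under stated assumptions: the article does not specify how Trust Level is expressed, so the 0.0 to 1.0 score, the thresholds, and the names `ModelSignals` and `review_tier` are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelSignals:
    trust_score: float          # assumed scale: 0.0 (unknown publisher) to 1.0 (well established)
    license_approved: bool      # outcome of a separate license check
    flagged_jurisdictions: int  # number of footprint countries on a watchlist


def review_tier(signals: ModelSignals, low_trust: float = 0.4, high_trust: float = 0.8) -> str:
    """Pick a review tier; any single red flag forces the deepest review."""
    if (
        not signals.license_approved
        or signals.flagged_jurisdictions > 0
        or signals.trust_score < low_trust
    ):
        return "deep_review"
    if signals.trust_score >= high_trust:
        return "fast_track"
    return "standard_review"


established = ModelSignals(trust_score=0.9, license_approved=True, flagged_jurisdictions=0)
unknown = ModelSignals(trust_score=0.2, license_approved=True, flagged_jurisdictions=0)
print(review_tier(established), review_tier(unknown))
```

High trust never overrides a license or jurisdiction flag in this sketch. That ordering reflects the idea that trust is one input among three, not a shortcut around the other two.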

Turning Intelligence into Operational Action

Model Intelligence operationalizes these data points across the model lifecycle through the following capabilities:

- Automated Metadata Detection – Identifies license and geographic footprint during scanning.

- Trust Level Scoring – Assesses publisher credibility to inform risk prioritization.

- AIBOM Integration – Embeds metadata into a structured inventory of model components, datasets, and dependencies to support licensing reviews and compliance workflows.

This transforms fragmented metadata into structured, actionable intelligence across the model lifecycle.
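As a rough picture of what such a structured record might look like, the sketch below serializes one model's metadata to JSON. The field names and the `ModelRecord` and `to_aibom_entry` helpers are hypothetical. They do not reflect HiddenLayer's AIBOM schema or any published bill-of-materials standard, and the sample values are invented for illustration.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class ModelRecord:
    name: str
    version: str
    license: str                      # e.g. an SPDX identifier (assumed convention)
    geographic_footprint: List[str]   # ISO 3166-1 alpha-2 codes (assumed convention)
    trust_level: str                  # assumed labels such as "low", "medium", "high"
    datasets: List[str] = field(default_factory=list)
    dependencies: List[str] = field(default_factory=list)


def to_aibom_entry(record: ModelRecord) -> str:
    """Serialize one record as deterministic, human-readable JSON."""
    return json.dumps(asdict(record), indent=2, sort_keys=True)


record = ModelRecord(
    name="example-org/example-model",  # invented sample values
    version="1.0.0",
    license="Apache-2.0",
    geographic_footprint=["US", "DE"],
    trust_level="high",
    dependencies=["example-tokenizer"],
)
print(to_aibom_entry(record))
```

Keeping license, footprint, and trust in the same record as datasets and dependencies means a licensing or compliance review can query one inventory instead of reassembling the picture per model.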

What This Means for Your Organization

Model Intelligence enables organizations to vet models quickly and confidently, replacing manual guesswork and fragmented research. It provides clear visibility into licensing terms and geographic exposure, helping teams understand usage rights and jurisdictional risk before deployment. By embedding this insight into governance workflows, it strengthens alignment with internal policies and regulatory requirements while reducing the risk of deploying improperly licensed or high-risk models. The result is faster, more responsible AI adoption without added organizational risk.
