AI Guardrails for Safe and Compliant AI

Enforce safety, compliance, and policy boundaries across LLMs, agents, and AI applications.

Trusted by Industry Leaders

AI outputs must stay safe, consistent, and policy aligned

Enterprises need predictable and compliant AI behavior. Without guardrails, LLMs and agents can generate harmful content, leak sensitive data, violate policy, or behave inconsistently across applications.

AI systems need standardized behavioral controls

Teams struggle to enforce consistent rules for tone, safety, compliance, and restricted topics across departments and applications.

LLM outputs can unintentionally reveal sensitive data

Without safety filters and real-time checks, generative systems may produce PII, PHI, or proprietary information.

Guardrails that enforce safe use of AI at enterprise scale

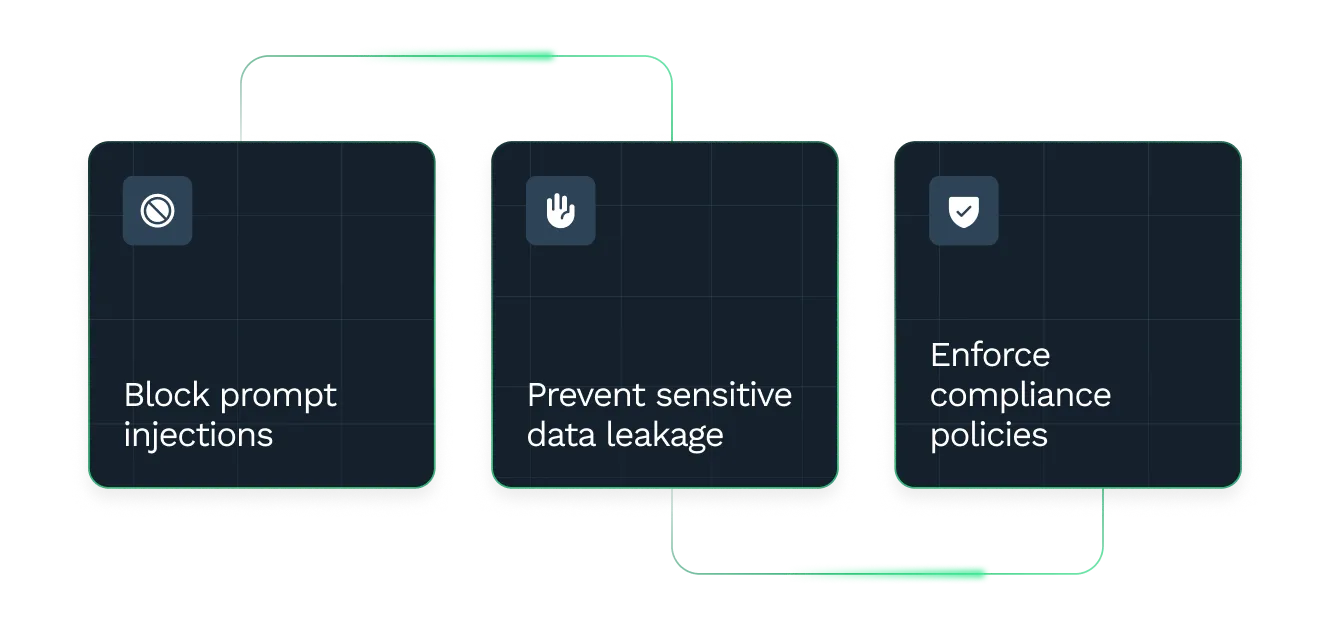

Output Safety and Content Controls

Enforce boundaries around content, toxicity, bias, and regulatory restrictions.

Policy Driven Response Shaping

Ensure responses comply with security policies, including protection against prompt injection, data leakage, and sensitive information exposure.

Sensitive Data Protection

Detect and prevent PII, PHI, and proprietary data exposure in generated content.
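As a rough illustration of output-side detection and redaction, the sketch below scans generated text for two pattern types and replaces matches with placeholders. The `PATTERNS` table and the `redact` helper are hypothetical names chosen for this example and do not represent any product's API. Production detectors cover many more formats and usually pair pattern matching with trained classifiers.

```python
import re

# Hypothetical patterns for illustration only; real detectors handle far
# more formats (names, addresses, medical record numbers, and so on).
PATTERNS = {
    "EMAIL": re.compile(r"\b[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}\b"),
    "US_SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact(text: str) -> tuple[str, list[str]]:
    """Replace each match with a [LABEL] placeholder and list the labels that fired."""
    findings = []
    for label, pattern in PATTERNS.items():
        # subn returns the rewritten text and the number of substitutions made
        text, count = pattern.subn(f"[{label}]", text)
        if count:
            findings.append(label)
    return text, findings
```

A caller could log the returned labels for audit purposes and pass only the redacted text downstream.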

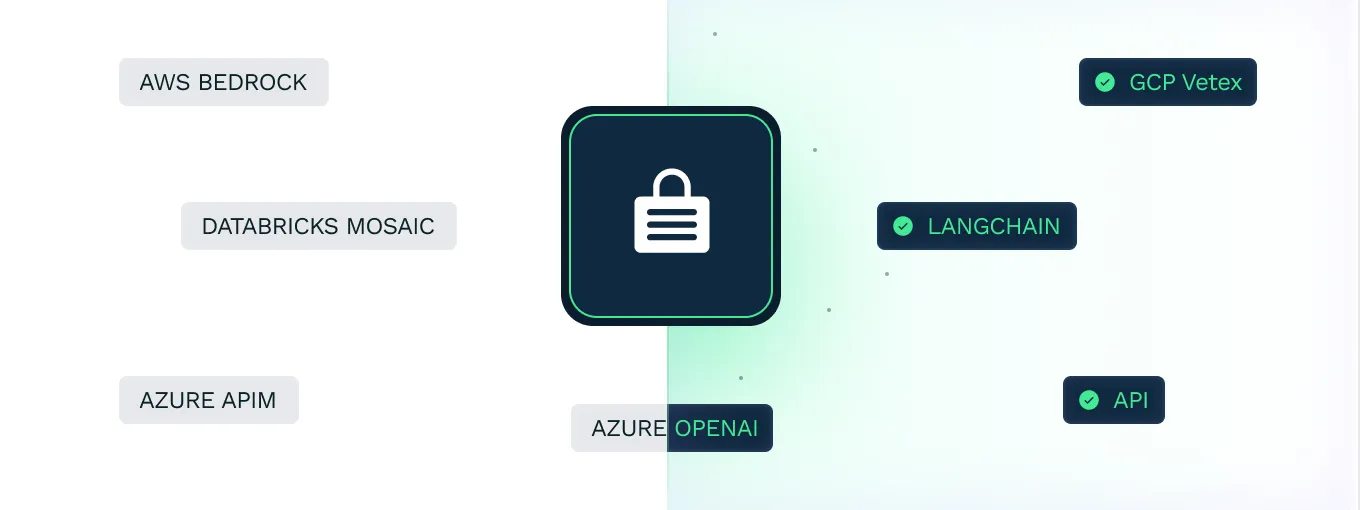

MCP and Framework Traffic Inspection

Combine safety guardrails with real-time threat detection and prompt injection blocking.

Safe, predictable, and policy aligned AI

Enterprise control for both agentic and generative AI systems.

Standardize safe use of AI

Apply consistent policies across business units.

Prevent sensitive data leakage

Detect and redact PII and protected information.

Ensure compliant responses

Enforce legal, regulatory, and brand requirements.

Strengthen defenses

Combine safety guardrails with prompt attack detection.

Maintain trust and quality

Deliver reliable outputs that users can depend on, with support for leading frameworks including OpenAI, AWS Bedrock, and MCP-based systems.

"As enterprises embrace AI, security can’t be an afterthought. HiddenLayer makes it possible for CISOs to lead with confidence and keep innovation secure."

Tomas Maldonado

CISO, NFL

"Securing AI requires protection across the entire lifecycle. HiddenLayer delivers end-to-end visibility and defense so CISOs can safeguard AI at every stage."

Jerry Davis

Founder, Gryphon X

"Strong governance is critical as AI becomes embedded across enterprises. HiddenLayer provides the comprehensive framework needed to manage risk and align AI adoption with visibility, compliance, and accountability."

Gary McAlum

Prior CISO, AIG

"The integrity of AI systems is as critical as the integrity of our software supply chains. If we can't secure the building blocks of AI, we risk exposing enterprises to new classes of attack. HiddenLayer is tackling this problem at its root, delivering the protections the world needs most."

Thomas Pace

Co-Founder & CEO, NetRise

"AI introduces risks that traditional cybersecurity tools weren't built to handle. HiddenLayer's comprehensive platform consolidates what CISOs need to manage and defend the critical AI tools that enable the business."

Timothy Youngblood

CISO in Residence, Astrix Security

"AI security demands purpose-built technology and trusted partners to counter AI attack vectors. HiddenLayer arms CISOs with a comprehensive platform to identify and manage AI-specific risks, enabling organizations to innovate with confidence and at the speed of modern business."

Josh Lemos

CISO, GitLab

"One of the elements that impresses me about HiddenLayer is the elegance of their technology. Their non-invasive AIDR solution provides robust, real-time protection against adversarial attacks without ever needing to access a customer's sensitive data or proprietary models. This is a game-changer for enterprises in regulated industries like finance and healthcare, as well as federal agencies, where data privacy is paramount."

Doug Merritt

Chairman, CEO & President at Aviatrix and prior CEO at Splunk

Learn from the Industry’s AI Security Experts

Research, guidance, and frameworks from the team shaping AI security standards.

Claude Mythos: AI Security Gaps Beyond Vulnerability Discovery

Anthropic’s announcement of Claude Mythos and the launch of Project Glasswing may mark a significant inflection point in the evolution of AI systems. Unlike previous model releases, this one was defined as much by what was not done as by what was. According to Anthropic and early reporting, the company has developed a model that it says can autonomously discover and exploit vulnerabilities across operational systems, and has chosen not to release it publicly.

That decision reflects a recognition that AI systems are evolving beyond tools that simply need to be secured and are beginning to play a more active role in shaping security outcomes. They are increasingly described as capable of performing tasks traditionally carried out by security researchers, but doing so at scale and with autonomy introduces new risks that require visibility, oversight, and control. It also raises broader questions about how these systems are governed over time, particularly as access expands and more capable variants may be introduced into wider environments. As these systems take on more active roles, the challenge shifts from securing the model itself to understanding and governing how it behaves in practice.

In this post, we examine what Mythos may represent, why its restricted release matters, and what it signals for organizations deploying or securing AI systems, including how these reported capabilities could reshape vulnerability management processes and the role of human expertise within them. We also explore what this shift reveals about the limits of alignment as a security strategy, the emerging risks across the AI supply chain, and the growing need to secure AI systems operating with increasing autonomy.

What Anthropic Built and Why It Matters

Claude Mythos is positioned as a frontier, general-purpose model with advanced capabilities in software engineering and cybersecurity. Anthropic’s own materials indicate that models at this level can potentially “surpass all but the most skilled” human experts at identifying and exploiting software vulnerabilities, reflecting a meaningful shift in coding and security capabilities.

According to public reporting and Anthropic’s own materials, the model is described as able to:

- Identify previously unknown vulnerabilities, including long-standing issues missed by traditional tooling

- Chain and combine exploits across systems

- Autonomously identify and exploit vulnerabilities with minimal human input

These are not incremental improvements. The reported performance gap between Mythos and prior models suggests a shift from “AI-assisted security” to AI-driven vulnerability discovery and exploitation. Importantly, these capabilities may extend beyond isolated analysis to interact with systems, tools, and environments, making their behavior and execution context increasingly relevant from a security standpoint.

Anthropic’s response is equally notable. Rather than releasing Mythos broadly, they have limited access to a small group of large technology companies, security vendors, and organizations that maintain critical software infrastructure through Project Glasswing, enabling them to use the model to identify and remediate vulnerabilities across both first-party and open-source systems. The stated goal is to give defenders a head start before similar capabilities become widely accessible. This reflects a shift toward treating advanced model capabilities as security-sensitive.

As these capabilities are put into practice through initiatives like Project Glasswing, the focus will naturally shift from what these models can discover to how organizations operationalize that discovery, ensuring vulnerabilities are not only identified but effectively prioritized, shared, and remediated. This also introduces a need to understand how AI systems operate as they carry out these tasks, particularly as they move beyond analysis into action.

AI Systems Are Now Part of the Attack Surface

Even if Mythos itself is not publicly available, the trajectory is clear. Models with similar capabilities will emerge, whether through competing AI research organizations, open-source efforts, or adversarial adaptation.

This means organizations should assume that AI-generated attacks will become increasingly capable, faster, and harder to detect. AI is no longer just part of the system to be secured; it is increasingly part of the attack surface itself. As a result, security approaches must extend beyond protecting systems from external inputs to understanding how AI systems themselves behave within those environments.

Alignment Is Not a Security Control

This also exposes a deeper assumption that underpins many current approaches to AI security: that the model itself can be trusted to behave as intended. In practice, this assumption does not hold. Alignment techniques (methods used to guide a model’s behavior toward intended goals, safety constraints, and human-defined rules), prompting strategies, and safety tuning can reduce risk, but they do not eliminate it. Models remain probabilistic systems that can be influenced, manipulated, or fail in unexpected ways. As systems like Mythos are expected to take on more active roles in identifying and exploiting vulnerabilities, the question is no longer just what the model can do, but how its behavior is verified and controlled.

This becomes especially important as access to Mythos capabilities may expand over time, whether through broader releases or derivative systems. As exposure increases, so does the need for continuous evaluation of model behavior and risk. Security cannot rely solely on the model’s internal reasoning or intended alignment; it must operate independently, with external mechanisms that provide visibility into actions and enforce constraints regardless of how the model behaves.
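To make the idea of an external enforcement mechanism concrete, here is a minimal sketch of a policy gate that sits outside the model and checks each proposed tool call before it runs. The `ToolCall` and `PolicyGate` names, the allowlist, and the blocked path prefixes are all assumptions made for illustration. They are not drawn from any specific framework or product.

```python
from dataclasses import dataclass, field


@dataclass
class ToolCall:
    tool: str   # name of the tool the model wants to invoke
    args: dict  # arguments the model supplied


@dataclass
class PolicyGate:
    """Hypothetical gate that decides on tool calls independently of the model."""
    allowed_tools: set
    blocked_path_prefixes: tuple = ("/etc", "/root")
    audit_log: list = field(default_factory=list)

    def check(self, call: ToolCall) -> bool:
        # Rule 1: the tool must be on the allowlist.
        allowed = call.tool in self.allowed_tools
        # Rule 2: a "path" argument must not point into a blocked location.
        path = str(call.args.get("path", ""))
        if allowed and path.startswith(self.blocked_path_prefixes):
            allowed = False
        # Every decision is recorded, whether or not the call is allowed.
        self.audit_log.append((call.tool, allowed))
        return allowed
```

The gate never consults the model's reasoning. It evaluates only the requested action, so the constraint holds regardless of how the model was prompted or manipulated.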

The AI Supply Chain Risk

At the same time, the introduction of initiatives like Project Glasswing highlights a dimension that is often overlooked in discussions of AI-driven security: the integrity of the AI supply chain itself. As organizations begin to collaborate, share findings, and potentially contribute fixes across ecosystems, the trustworthiness of those contributions becomes critical. If a model or pipeline within that ecosystem is compromised, the downstream impact could extend far beyond a single organization. HiddenLayer’s 2025 Threat Report highlights vulnerabilities within the AI supply chain as a key attack vector, driven by dependencies on third-party datasets, APIs, labeling tools, and cloud environments, with service providers emerging as one of the most common sources of AI-related breaches.

In this context, the risk is not just exposure, but propagation. A poisoned model contributing flawed or malicious “fixes” to widely used systems represents a fundamentally different kind of risk that is not addressed by traditional vulnerability management alone. This shifts the focus from individual model performance to the security and provenance of the entire pipeline through which models, outputs, and updates are distributed.
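One basic building block for pipeline provenance is checking that an artifact matches a digest pinned at a trusted point. The sketch below uses Python's standard `hashlib` and `hmac` modules. The function names are hypothetical, and the example assumes the pinned digest was recorded through a trusted channel.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of the artifact bytes as a hex string."""
    return hashlib.sha256(data).hexdigest()


def verify_artifact(data: bytes, pinned_digest: str) -> bool:
    """Check the artifact against a digest recorded when it was last trusted."""
    # compare_digest performs a constant-time comparison of the two strings
    return hmac.compare_digest(sha256_hex(data), pinned_digest.lower())
```

A digest match only shows that the artifact is unchanged since it was pinned. It says nothing about whether the pinned version was benign, so it complements model scanning and review rather than replacing them.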

Agentic AI and the Next Security Frontier

These risks are further amplified as AI systems become more autonomous and begin to operate in agentic contexts. Models capable of chaining actions, interacting with tools, and executing tasks across environments introduce a new class of security challenges that extend beyond prompts or static policy controls. As autonomy increases, so does the importance of understanding what actions are being taken in real time, how decisions are made, and what downstream effects those actions produce.

As a result, security must evolve from static safeguards to continuous monitoring and control of execution. Systems like Mythos illustrate not just a step change in capability, but the emergence of a new operational reality where visibility into runtime behavior and the ability to intervene becomes essential to managing risk at scale. At the same time, increased capability and visibility raise a parallel challenge: how organizations handle the volume and impact of what these systems uncover.

Discovery Is Only Half the Equation

Finding vulnerabilities at scale is valuable, but discovery alone does not improve security. Vulnerabilities must be:

- validated

- prioritized

- remediated

In practice, this is where the process becomes most complex. Discovery is only the starting point. The real work begins with disclosure: identifying the right owners, communicating findings, supporting investigation, and ultimately enabling fixes to be deployed safely. This process is often fragmented, time-consuming, and difficult to scale.

Anthropic’s approach, pairing capability with coordinated disclosure and patching through Project Glasswing, reflects an understanding of this challenge. Detection without mitigation does not reduce risk, and increasing the volume of findings without addressing downstream bottlenecks can create more pressure than progress.

While models like Mythos may accelerate discovery, the processes that follow (triage, prioritization, coordination, and patching) remain largely human-driven and operationally constrained. Identifying vulnerabilities faster is not sufficient on its own. The industry will likely need new processes and methodologies to handle this volume effectively.

Over time, this may evolve toward more automated defense models, where vulnerabilities are not only detected but also validated, prioritized, and remediated in a more continuous and coordinated way. But today, that end-to-end capability remains incomplete.

The Human Dimension

It is also worth acknowledging the human dimension of this shift. For many security researchers, the capabilities described in early reporting on models like Mythos raise understandable concerns about the future of their role. While these capabilities have not yet been widely validated in open environments, they point to a direction that is difficult to ignore.

When systems begin performing tasks traditionally associated with vulnerability discovery, it can create uncertainty about where human expertise fits in.

However, the challenges outlined above suggest a more nuanced reality. Discovery is only one part of the security lifecycle, and many of the most difficult problems, like contextual risk assessment, coordinated disclosure, prioritization, and safe remediation, remain deeply human.

As the volume and speed of vulnerability discovery increase, the role of the security researcher is likely to evolve rather than diminish. Expertise will be needed not just to identify vulnerabilities, but to:

- interpret their impact

- prioritize response

- guide remediation strategies

- and oversee increasingly automated systems

In this sense, AI does not eliminate the need for human expertise; it shifts where that expertise is applied. The organizations that navigate this transition effectively will be those that combine automated discovery with human judgment, ensuring that speed is matched with context, and scale with control.

Defenders Must Match the Pace of Discovery

The more consequential shift is not that AI can find vulnerabilities, but how quickly it can do so.

As discovery accelerates, so must:

- remediation timelines

- patch deployment

- coordination across ecosystems

Open-source contributors and enterprise teams alike will need to operate at a pace that keeps up with automated discovery. If defenders cannot match that speed, the advantage shifts to adversaries who will inevitably gain access to similar models and capabilities. At the same time, increased speed reduces the window for direct human intervention, reinforcing the need for mechanisms that can observe and control actions as they occur, while allowing human expertise to focus on higher-level oversight and decision making.

Not All Vulnerabilities Matter Equally

A critical nuance is often overlooked: not all vulnerabilities carry the same risk. Some are theoretical, some are difficult to exploit, and others have immediate, high-impact consequences. How they are evaluated can also vary significantly across industries.

Organizations need to move beyond volume-based thinking and focus on impact-based prioritization. Risk is contextual and depends on:

- industry-specific factors

- environment-specific configurations

- internal architecture and controls

The ability to determine which vulnerabilities matter, and to act accordingly, is as important as the ability to find them.
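As a simple illustration of impact-based prioritization, the sketch below ranks findings by a contextual score rather than by raw severity. The fields, the weighting formula, and the 0.5 discount for unexposed assets are assumptions chosen for this example. Each organization would substitute its own factors.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    name: str
    severity: float           # 0-10 base severity, e.g. a CVSS-style score
    exploitability: float     # 0-1 estimate of how practical exploitation is
    asset_criticality: float  # 0-1 importance of the affected system
    exposed: bool             # reachable from an untrusted network?


def contextual_score(f: Finding) -> float:
    """Hypothetical score: base severity scaled by environment-specific context."""
    exposure = 1.0 if f.exposed else 0.5
    return f.severity * f.exploitability * f.asset_criticality * exposure


def prioritize(findings: list) -> list:
    """Order findings from highest to lowest contextual score."""
    return sorted(findings, key=contextual_score, reverse=True)
```

Under this scoring, a medium-severity flaw on an exposed, business-critical system can outrank a critical-severity flaw that is hard to exploit on an isolated, low-value host.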

Conclusion

Claude Mythos and Project Glasswing point to a broader shift in how AI may impact vulnerability discovery and remediation. While the full extent of these capabilities is still emerging, they suggest a future where the speed and scale of discovery could increase significantly, placing new pressure on how organizations respond.

In that context, security may increasingly be shaped not just by the ability to find vulnerabilities, nor even to fix them in isolation, but by the ability to continuously prioritize, remediate, and keep pace with ongoing discovery, while focusing on what matters most. This will require moving beyond assumptions that aligned models can be inherently trusted, toward approaches that continuously validate behavior, enforce boundaries, and operate independently of the model itself.

As AI systems begin to move from assisting with security tasks to potentially performing them, organizations will need to account for the risks introduced by delegating these responsibilities. Maintaining visibility into how decisions are made and control over how actions are executed is likely to become more important as the window for direct human intervention narrows and the role of human expertise shifts toward oversight and guidance. This includes not only securing individual models but also ensuring the integrity of the broader AI supply chain and the systems through which models interact, collaborate, and evolve.

As these capabilities continue to evolve, success may depend not just on adopting AI-driven tools but on how effectively they are operationalized, combining automated discovery with human judgment, and ensuring that detection can translate into coordinated action and measurable risk reduction. In practice, this may require security approaches that extend beyond discovery and remediation to include greater visibility and control over how AI-driven actions are carried out in real-world environments. As autonomy increases, this also means treating runtime behavior as a primary security concern, ensuring that AI systems can be observed, governed, and controlled as they act.

2026 AI Threat Landscape Report

The threat landscape has shifted.

In this year's HiddenLayer 2026 AI Threat Landscape Report, our findings point to a decisive inflection point: AI systems are no longer just generating outputs; they are taking action.

Agentic AI has moved from experimentation to enterprise reality. Systems are now browsing, executing code, calling tools, and initiating workflows on behalf of users. That autonomy is transforming productivity and fundamentally reshaping risk.

In this year’s report, we examine:

- The rise of autonomous, agent-driven systems

- The surge in shadow AI across enterprises

- Growing breaches originating from open models and agent-enabled environments

- Why traditional security controls are struggling to keep pace

Our research reveals that attacks on AI systems are steady or rising across most organizations, shadow AI is now a structural concern, and breaches increasingly stem from open model ecosystems and autonomous systems.

The 2026 AI Threat Landscape Report breaks down what this shift means and what security leaders must do next.

We’ll be releasing the full report March 18th, followed by a live webinar April 8th where our experts will walk through the findings and answer your questions.

Enforce AI behavior

Implement enterprise-grade guardrails for safe and compliant AI.