HiddenLayer in the News

See how our research, leadership, and innovations are shaping the global conversation on AI security.


HiddenLayer Unveils New Agentic Runtime Security Capabilities for Securing Autonomous AI Execution

Austin, TX – March 23, 2026 – HiddenLayer, the leading AI security company, today announced the next generation of its AI Runtime Security module, introducing new capabilities designed to protect autonomous AI agents as they make decisions and take action. As enterprises increasingly adopt agentic AI systems, these capabilities extend HiddenLayer’s AI Runtime Security platform to secure what matters most in agentic AI: how agents behave and take actions.

The update introduces three core capabilities for securing agentic AI workloads:

• Agentic Runtime Visibility

• Agentic Investigation & Threat Hunting

• Agentic Detection & Enforcement

One in eight AI breaches is linked to agentic systems, according to HiddenLayer’s 2026 AI Threat Landscape Report. Each agent interaction expands the operational blast radius and introduces new forms of runtime risk. Yet most AI security controls stop at prompts, policies, or static permissions, leaving execution-time behavior largely unobserved and uncontrolled.

These new agentic security capabilities give security teams visibility into how agents execute and enable them to detect and stop risks in multi-step autonomous workflows, including prompt injection, malicious tool calls, and data exfiltration, before sensitive information is exposed.

“AI agents operate at machine speed. If they’re compromised, they can access systems, move data, and take action in seconds — far faster than any human could intervene,” said Chris Sestito, CEO of HiddenLayer. “That velocity changes the security equation entirely. Agentic Runtime Security gives enterprises the real-time visibility and control they need to stop damage before it spreads.”

With these new capabilities, security teams can:

- Gain complete runtime visibility into AI agent behavior — Reconstruct every session to see how agents interact with data, tools, and other agents, providing full operational context behind every action and decision.

- Investigate and hunt across agentic activity — Search, filter, and pivot across sessions, tools, and execution paths to identify anomalous behavior and uncover evolving threats. Validated findings can be easily operationalized into enforceable runtime policies, reducing friction between investigation and response.

- Detect and prevent multi-step agentic threats — Identify prompt injections, malicious tool calls, data exfiltration, and cascading attack chains unique to autonomous agents, ensuring real-time protection from evolving risks.

- Enforce adaptive security policies in real time — Automatically control agent access, redact sensitive data, and block unsafe or unauthorized actions based on context, keeping operations compliant and contained.

“As we expand the use of AI agents across our business, maintaining control and oversight is critical,” said Charles Iheagwara, AI/ML Security Leader at AstraZeneca. “Our goal is to have full scope visibility across all platforms and silos, so we’re focused on putting capabilities in place to monitor agent execution and ensure they operate safely and reliably at scale.”

Agentic Runtime Security supports enterprises as they expand agentic AI adoption, integrating directly into agent gateways and execution frameworks to enable phased deployment without application rewrites.

“Agentic AI changes the risk model because decisions and actions are happening continuously at runtime,” said Caroline Wong, Chief Strategy Officer at Axari. “HiddenLayer’s new capabilities give us the visibility into agent behavior that’s been missing, so we can safely move these systems into production with more confidence.”

The new agentic capabilities for HiddenLayer’s AI Runtime Security are available now as part of HiddenLayer’s AI Security Platform. Organizations can start with agentic runtime visibility and detection and expand to full threat hunting and enforcement as their AI agent programs mature.

Find more information at hiddenlayer.com/agents and contact sales@hiddenlayer.com to schedule a demo.


HiddenLayer Awarded AFWERX STTR Phase II Contract to Accelerate U.S. Department of Defense Security Adoption

Program includes research collaboration with the University at Buffalo

AUSTIN, Texas - April 9, 2024 - HiddenLayer, the leading security provider for artificial intelligence (AI) models and assets, today announced it has been selected by AFWERX for an STTR Phase II contract in the amount of $1.8MM focused on AI Detection & Response to address the most pressing challenges in the Department of the Air Force (DAF). The Air Force Research Laboratory and AFWERX have partnered to streamline the Small Business Innovation Research (SBIR) and Small Business Technology Transfer (STTR) process by accelerating the small business experience through faster proposal-to-award timelines, broadening the pool of potential applicants by expanding opportunities to small businesses, and eliminating bureaucratic overhead through continual process improvements in contract execution. The DAF began offering the Open Topic SBIR/STTR program in 2018, which expanded the range of innovations the DAF funds. On February 9, 2024, HiddenLayer began its work to create and provide innovative capabilities that will strengthen the national defense of the United States of America.

“The opportunity to extend the use of our AISec Platform to secure the US Department of Defense’s most critical models, while also accelerating commercialization in the private sector, is a significant milestone for HiddenLayer,” said Chris "Tito" Sestito, Co-Founder and CEO of HiddenLayer. “We are honored to collaborate with AFWERX and researchers at the University at Buffalo to advance research on adversarial AI attack methods, further driving our mission to help enterprises protect their most valuable technology.”

The views expressed are those of the author and do not necessarily reflect the official policy or position of the Department of the Air Force, the Department of Defense, or the U.S. government.

About HiddenLayer

HiddenLayer is the leading provider of security for AI. Its security platform helps enterprises safeguard the machine learning models behind their most important products. HiddenLayer is the only company to offer turnkey security for AI that does not add unnecessary complexity to models and does not require access to raw data and algorithms. Founded by a team with deep roots in security and ML, HiddenLayer aims to protect enterprises’ AI from inference, bypass, and extraction attacks, as well as model theft. The company is backed by a group of strategic investors, including M12, Microsoft's Venture Fund, Moore Strategic Ventures, Booz Allen Ventures, IBM Ventures, and Capital One Ventures.

About Air Force Research Laboratory (AFRL)

The Air Force Research Laboratory is the primary scientific research and development center for the Department of the Air Force. AFRL plays an integral role in leading the discovery, development, and integration of affordable warfighting technologies for our air, space and cyberspace force. With a workforce of more than 12,500 across nine technology areas and 40 other operations across the globe, AFRL provides a diverse portfolio of science and technology ranging from fundamental to advanced research and technology development. For more information, visit www.afresearchlab.com.

About AFWERX

As the innovation arm of the DAF and a directorate within the Air Force Research Laboratory, AFWERX brings cutting-edge American ingenuity from small businesses and start-ups to address the most pressing challenges of the DAF. AFWERX employs approximately 325 military, civilian and contractor personnel at six hubs and sites executing an annual $1.4 billion budget. Since 2019, AFWERX has executed 4,697 contracts worth more than $2.6 billion to strengthen the U.S. defense industrial base and drive faster technology transition to operational capability. For more information, visit: www.afwerx.com.

Contact

David Sack

SutherlandGold for HiddenLayer

hiddenlayer@sutherlandgold.com


HiddenLayer Adds AI Detection & Response for Generative AI

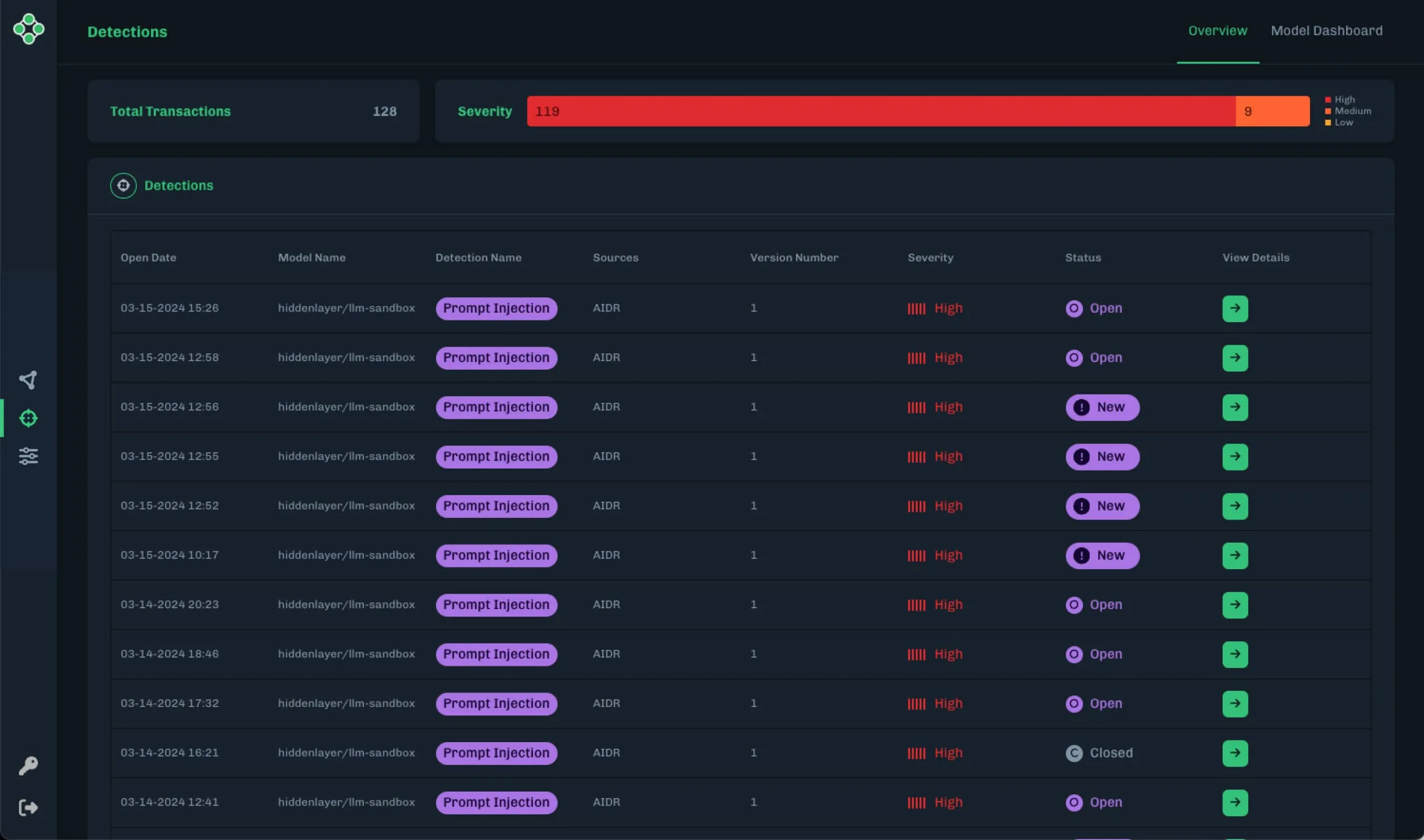

Expanding AISec Platform to Facilitate Adoption of LLMs with Enterprise-Grade Security

AUSTIN, Texas - March 19, 2024 - HiddenLayer, the leading security provider for artificial intelligence (AI) models and assets, today announced the launch of its latest product: AI Detection & Response for Generative AI. The new capability comes as part of HiddenLayer’s award-winning platform, formerly known as MLDR, extending HiddenLayer’s end-to-end security to organizations deploying LLM-based applications.

HiddenLayer's AI Detection & Response for Generative AI provides a set of security controls that enable real-time monitoring, detection, and response to threats specific to LLMs. The system supports most LLMs out of the box, including GPT-X, Llama, Mistral, and internally built LLMs. It intercepts traffic to and from LLM applications and can either block harmful transactions or generate alerts so security teams can take the necessary action. This helps organizations manage LLM deployments securely and mitigate the risk of data leaks, malicious use, and other abuses.

“HiddenLayer's AI Detection & Response allows organizations to responsibly navigate the risks associated with Generative AI, facilitating safe adoption of AI across industries,” said Chris "Tito" Sestito, Co-Founder and CEO of HiddenLayer. “By empowering CISOs and security leaders to bring Generative AI technologies to their organizations with responsible controls, this launch stands as the latest step in our mission to help enterprises protect their most valuable technology.”

The launch comes on the heels of the release of HiddenLayer’s AI Threat Landscape Report, which found that AI adoption continues to accelerate without proper security measures. With 98% of surveyed companies considering at least some of their AI models crucial to their business success, and 77% identifying breaches to their AI in the past year, the need to protect and secure all forms of AI is clear.

HiddenLayer’s AI Detection & Response fortifies organizations’ generative AI deployments against unauthorized access, infiltration attempts, and intellectual property theft in real time. The platform is automated, so it can recognize attacks as they happen and respond quickly to breach attempts against generative AI models, and it can be deployed and integrated into existing MLOps frameworks and security tools in minutes, not days. The platform is also scalable and provides clear reporting on detected threats, giving security teams insight into adversarial behavior.

Organizations leveraging HiddenLayer’s AI Detection & Response will see the following outcomes:

- Immediate and continuous real-time protection against cyber threats as outlined in third-party frameworks, including MITRE ATLAS and the OWASP Top 10 for LLM Applications.

- Unleashed innovation, enabling the quick deployment of models into production while proactively mitigating cybersecurity risks in real time as part of the MLOps lifecycle, ensuring a secure and efficient workflow.

- Support for maintaining compliance through safeguards against threats that could lead to regulatory issues.

- An organization empowered to safely and securely embrace modernization through the transformative capabilities of generative AI.

Learn more about HiddenLayer’s AI Detection & Response for Generative AI capability here.

About HiddenLayer

HiddenLayer is the leading provider of security for AI. Its security platform helps enterprises safeguard the machine learning models behind their most important products. HiddenLayer is the only company to offer turnkey security for AI that does not add unnecessary complexity to models and does not require access to raw data and algorithms. Founded by a team with deep roots in security and ML, HiddenLayer aims to protect enterprises’ AI from inference, bypass, and extraction attacks, as well as model theft. The company is backed by a group of strategic investors, including M12, Microsoft's Venture Fund, Booz Allen Ventures, IBM Ventures, and Capital One Ventures.

Contact

David Sack

SutherlandGold for HiddenLayer

hiddenlayer@sutherlandgold.com


HiddenLayer AI Threat Landscape Report Finds That 77% of Companies Identified Breaches to Their AI in the Past Year

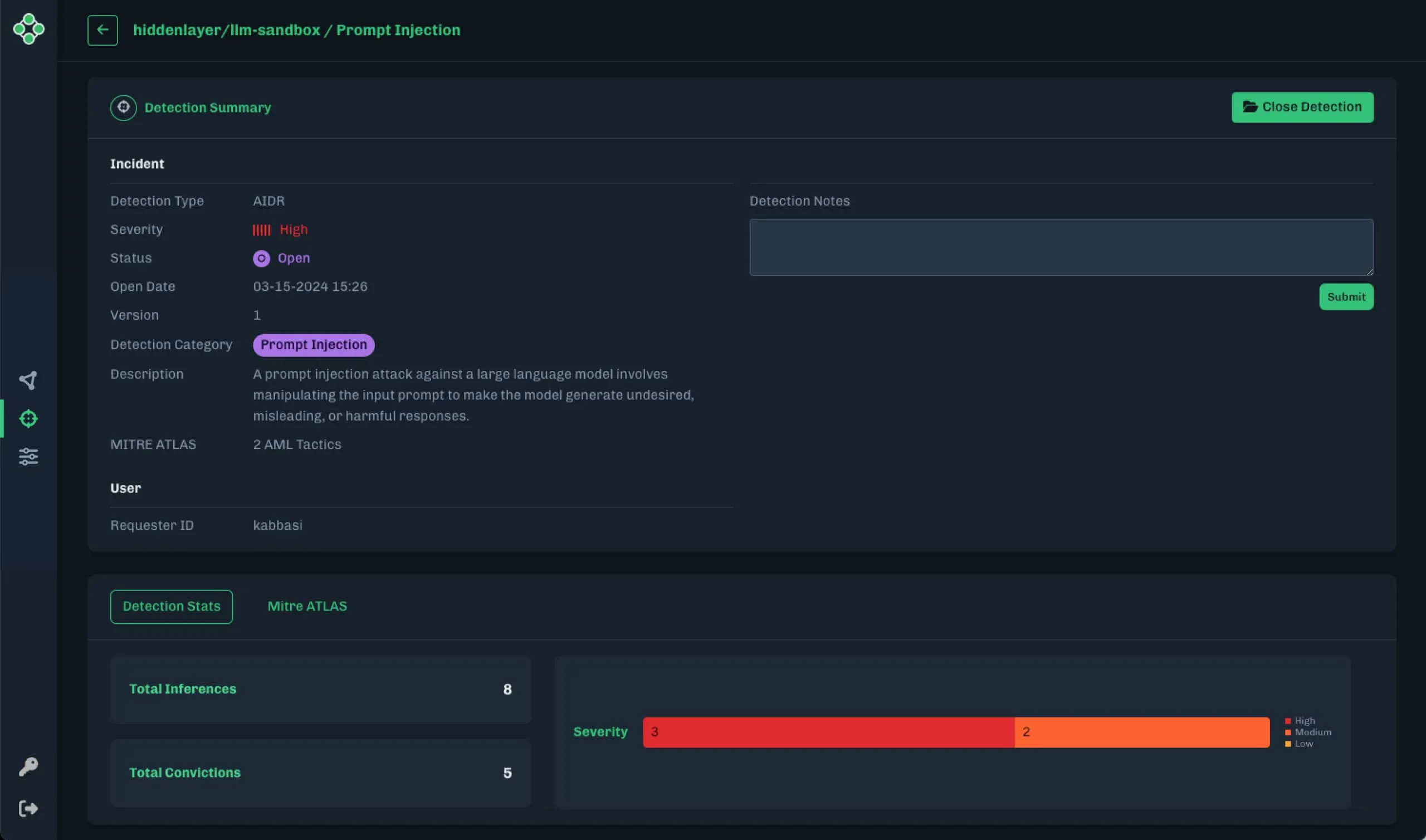

Survey of security leaders shows AI adoption is accelerating without proper security measures

AUSTIN, Texas - March 6, 2024 - HiddenLayer, the leading security provider for artificial intelligence (AI) models and assets, today released its inaugural AI Threat Landscape Report highlighting the pervasive use of AI and the risks involved in its deployment. Nearly all surveyed companies, 98%, consider at least some of their AI models crucial to their business success, and 77% identified breaches to their AI in the past year. Yet only 14% of IT leaders said their respective companies are planning and testing for adversarial attacks on AI models.

The report surveyed 150 IT security and data science leaders to shed light on the biggest vulnerabilities affecting AI today, their implications for commercial and federal organizations, and cutting-edge advancements in security controls for AI in all its forms.

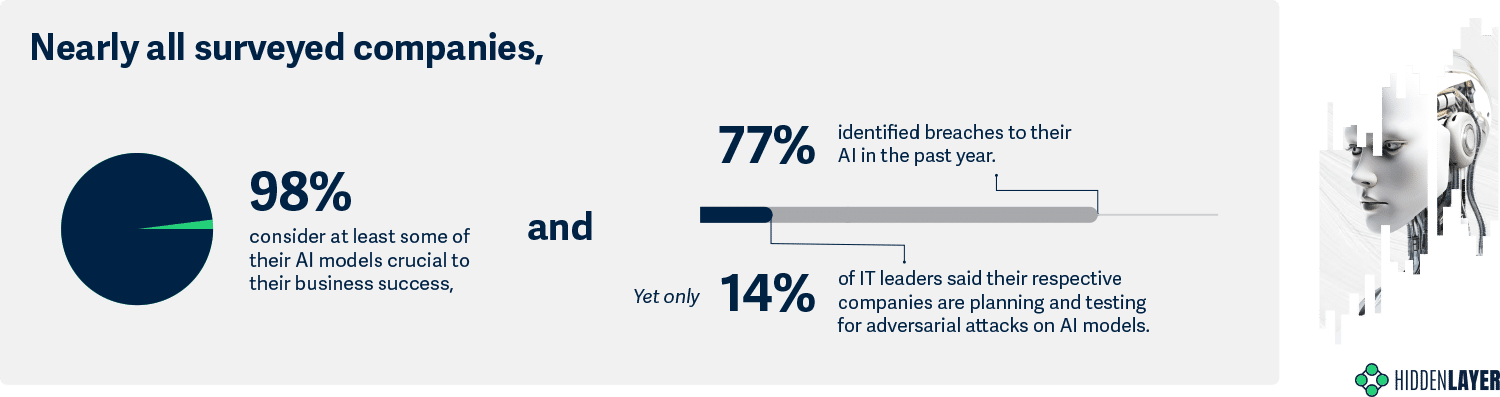

The survey uncovered AI’s widespread utilization by today’s businesses as companies have, on average, a staggering 1,689 AI models in production. In response, security for AI has become a priority, with 94% of IT leaders allocating budgets to secure their AI in 2024. Yet only 61% are highly confident in their allocation, and 92% are still developing a comprehensive plan for this emerging threat. These findings reveal the need for support in implementing security for AI.

“AI is the most vulnerable technology ever to be deployed in production systems,” said Chris "Tito" Sestito, Co-Founder and CEO of HiddenLayer. “The rapid emergence of AI has resulted in an unprecedented technological revolution, of which every organization in the world is affected. Our first-ever AI Threat Landscape Report reveals the breadth of risks to the world’s most important technology. HiddenLayer is proud to be on the front lines of research and guidance around these threats to help organizations navigate the security for AI landscape.”

Risks Involved with AI Use

Adversaries can leverage a variety of methods to utilize AI to their advantage. The most common risks of AI usage include:

- Manipulation to give biased, inaccurate, or harmful information.

- Creation of harmful content, such as malware, phishing, and propaganda.

- Development of deep fake images, audio, and video.

- Use by malicious actors to gain access to dangerous or illegal information.

Common Types of Attacks on AI

There are three major types of attacks on AI:

- Adversarial Machine Learning Attacks target AI algorithms with the aim of altering AI’s behavior, evading AI-based detection, or stealing the underlying technology.

- Generative AI System Attacks target AI’s filters and restrictions in order to generate content deemed harmful or illegal.

- Supply Chain Attacks target ML artifacts and platforms to achieve arbitrary code execution and deliver traditional malware.

Challenges to Securing AI

While industries are reaping the benefits of increased efficiency and innovation thanks to AI, many organizations do not have proper security measures in place to ensure safe use. Some of the biggest challenges reported by organizations in securing their AI include:

- Shadow AI: 61% of IT leaders acknowledge shadow AI (AI solutions that are not officially known to or under the control of the IT department) as a problem within their organizations.

- Third-Party AIs: 89% express concern about security vulnerabilities associated with integrating third-party AIs, and 75% believe third-party AI integrations pose a greater risk than existing threats.

Best Practices for Securing AI

HiddenLayer has outlined recommendations for organizations to begin securing their AI, including:

- Discovery and Asset Management: Begin by identifying where AI is already used in your organization. What applications has your organization already purchased that use AI or have AI-enabled features?

- Risk Assessment and Threat Modeling: Perform threat modeling to identify the vulnerabilities and attack vectors that malicious actors could exploit, completing your understanding of your organization’s AI risk exposure.

- Data Security and Privacy: Go beyond the typical implementation of encryption, access controls, and secure data storage practices to protect your AI model data. Evaluate and implement security solutions that are purpose-built to provide runtime protection for AI models.

- Model Robustness and Validation: Regularly assess the robustness of AI models against adversarial attacks. This involves pen-testing the model's response to various attacks, such as intentionally manipulated inputs.

- Secure Development Practices: Incorporate security into your AI development lifecycle. Train your data scientists, data engineers, and developers on the various attack vectors associated with AI.

- Continuous Monitoring and Incident Response: Implement continuous monitoring to detect anomalies and potential security incidents affecting your AI in real time, and develop a robust AI incident response plan to address security breaches or anomalies quickly and effectively.

HiddenLayer’s products and services accelerate the process of securing AI, with its AISec Platform providing a comprehensive AI security solution that ensures the integrity and safety of models throughout an organization's MLOps pipeline. HiddenLayer also provides its Machine Learning Detection & Response (MLDR), which enables organizations to automate and scale the protection of AI models and ensure their security in real-time, and its Model Scanner, which enables companies to evaluate the security and integrity of their ML artifacts before deploying them.

For more information, view the full report here.

About HiddenLayer

HiddenLayer is the leading provider of security for AI. Its security platform helps enterprises safeguard the machine learning models behind their most important products. HiddenLayer is the only company to offer turnkey security for AI that does not add unnecessary complexity to models and does not require access to raw data and algorithms. Founded by a team with deep roots in security and ML, HiddenLayer aims to protect enterprises’ AI from inference, bypass, and extraction attacks, as well as model theft. The company is backed by a group of strategic investors, including M12, Microsoft's Venture Fund, Booz Allen Ventures, IBM Ventures, and Capital One Ventures.

Contact

David Sack

SutherlandGold for HiddenLayer

hiddenlayer@sutherlandgold.com


HiddenLayer with OpenPolicy Announces Participation in the Department of Commerce Consortium Dedicated to AI Safety

HiddenLayer, alongside OpenPolicy AI Coalition members, is excited to partner with the NIST U.S. AI Safety Institute Consortium (AISIC).

AUSTIN, Texas - February 8, 2024 - Today HiddenLayer, the leading security provider for artificial intelligence (AI) models and assets, announced that, via the OpenPolicy AI Coalition, it has joined more than 200 of the nation’s leading AI stakeholders in a Department of Commerce initiative to support the development and deployment of trustworthy and safe AI. Established by the Department of Commerce’s National Institute of Standards and Technology (NIST), the U.S. AI Safety Institute Consortium (AISIC) will bring together AI creators and users, academics, government and industry researchers, and civil society organizations to meet this mission.

“We proudly join the NIST Artificial Intelligence Safety Institute Consortium alongside a coalition of AI innovators brought together by OpenPolicy, supporting the trusted deployment of AI and advancing the administration’s policy goals,” said Chris Sestito, CEO & Co-Founder, HiddenLayer. “Our mission to provide the most comprehensive security solution for AI is rooted in our commitment to protect government, industry, and society at large from all emerging AI threats.”

“OpenPolicy and its coalition of innovative AI companies are honored to take part in AISIC. The launch of the U.S. Artificial Intelligence Safety Institute is a necessary step forward in ensuring the trusted deployment of AI, and achieving the administration’s AI policy goals,” said Dr. Amit Elazari, CEO and Co-Founder, OpenPolicy. “Supporting the trusted deployment and development of AI entails supporting the development of cutting-edge innovative solutions needed to protect government, industry, and society from emerging AI threats. Innovative companies stand at the forefront of developing leading security, safety, and trustworthy AI and privacy solutions, and these are the communities we represent. Our AI coalition is committed to supporting the U.S. government and implementing agencies in this effort and will provide research, frameworks, benchmarks, policy support, and tooling to advance the trusted deployment and development of AI.”

“The U.S. government has a significant role to play in setting the standards and developing the tools we need to mitigate the risks and harness the immense potential of artificial intelligence. President Biden directed us to pull every lever to accomplish two key goals: set safety standards and protect our innovation ecosystem. That’s precisely what the U.S. AI Safety Institute Consortium is set up to help us do,” said Secretary Raimondo. “Through President Biden’s landmark Executive Order, we will ensure America is at the front of the pack – and by working with this group of leaders from industry, civil society, and academia, together we can confront these challenges to develop the measurements and standards we need to maintain America’s competitive edge and develop AI responsibly.”

The consortium includes more than 200 member companies and organizations that are on the frontlines of developing and using AI systems, as well as the civil society and academic teams that are building the foundational understanding of how AI can and will transform our society. These entities represent the nation’s largest companies and innovative startups; creators of the world’s most advanced AI systems and hardware; key members of civil society and the academic community; and representatives of professions with deep engagement in AI’s use today. The consortium also includes state and local governments, as well as non-profits. The consortium will also work with organizations from like-minded nations that have a key role to play in setting interoperable and effective safety standards around the world.

The full list of consortium participants is available here.

About HiddenLayer

HiddenLayer, a Gartner-recognized AI Application Security company, helps enterprises safeguard the machine learning models behind their most important products with a comprehensive security platform. Only HiddenLayer offers turnkey security for AI that does not add unnecessary complexity to models and does not require access to raw data and algorithms. Founded in March of 2022 by experienced security and ML professionals, HiddenLayer is based in Austin, Texas. For additional information, including product updates and the latest research reports, visit www.hiddenlayer.com.

Contacts

Hannah Williams

SutherlandGold for HiddenLayer

hiddenlayer@sutherlandgold.com
